Readme

Depth Anything V3 — Metric Large

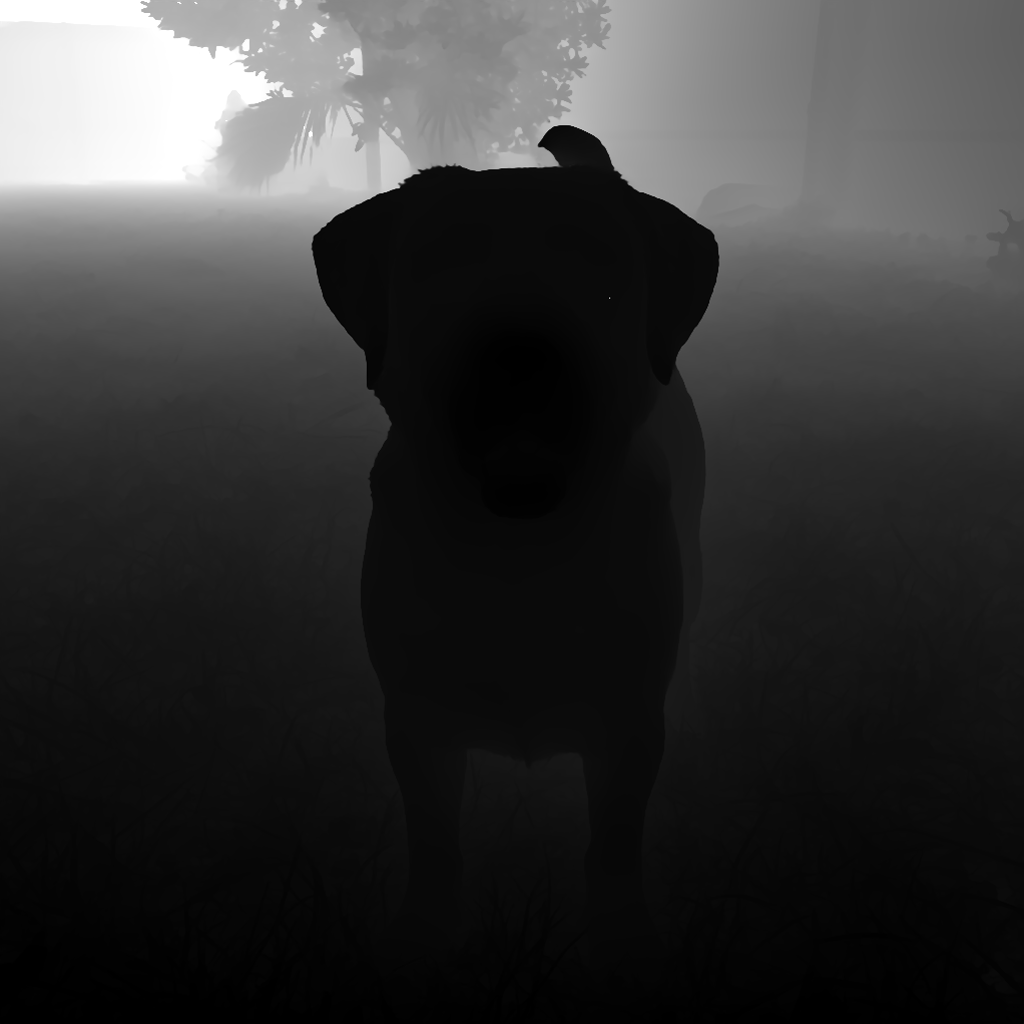

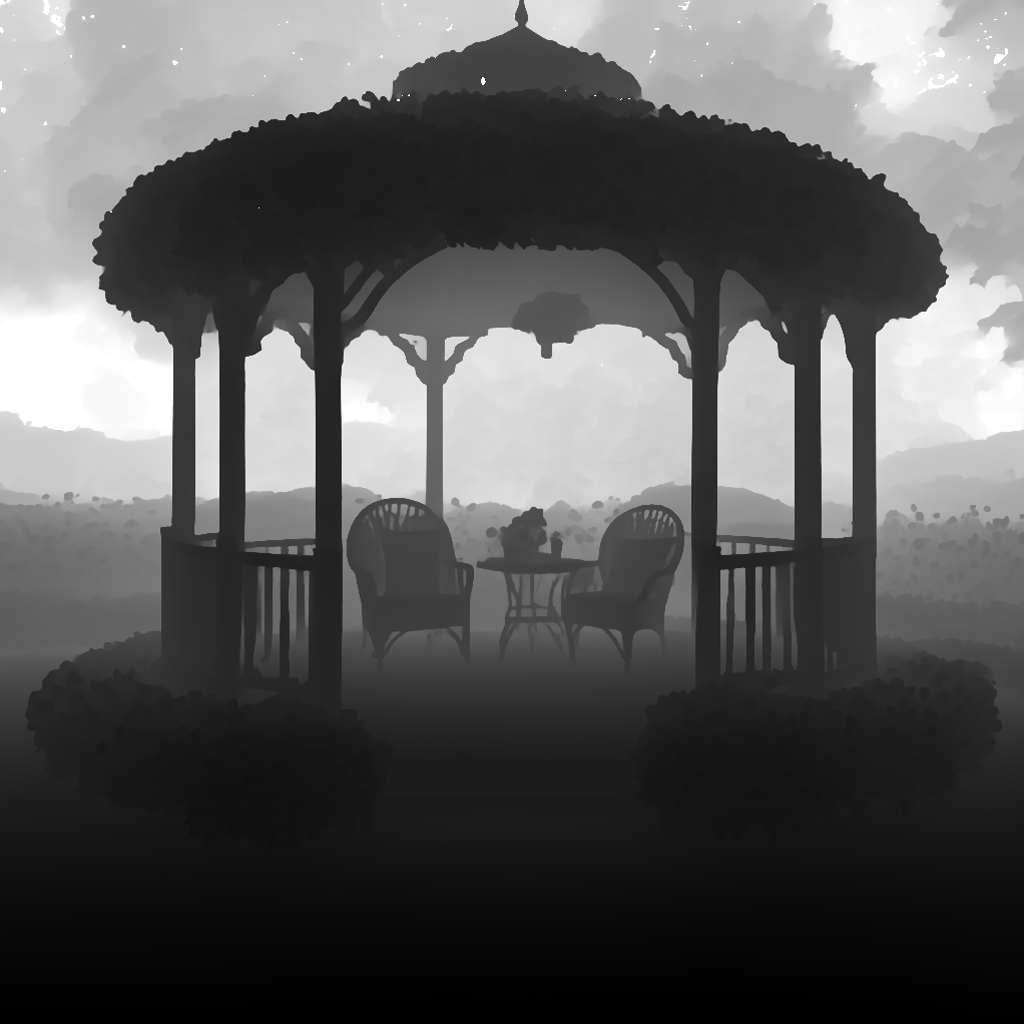

Estimate real-world depth in meters from a single image. Returns a 16-bit PNG depth map with metric scale metadata, ready for 3D reconstruction, parallax effects, robotics, or any application that needs actual distances (not just relative ordering).

Based on Depth Anything 3 (DA3METRIC-LARGE) by ByteDance. Apache 2.0 license.

Why this model?

| Feature | This model | Typical DA3 wrappers |

|---|---|---|

| Output precision | 16-bit PNG (65,535 levels) | 8-bit (256 levels) |

| Metric depth | Real distances in meters with decode formula | Relative depth only |

| Inference resolution | Up to 1120px (reduces ViT grid artifacts) | Default 504px (visible grid) |

| EXIF handling | Auto-normalizes orientation before inference | Often ignored (rotated outputs) |

| Raw float32 output | Optional NPZ with full-precision metric depth | Not available |

| Stable API contract | Versioned output schema (da3-metric/v1) |

Unversioned |

Quick start

Python

import replicate

output = replicate.run(

"wolfire/depth-anything-v3-metric-large",

input={"image": "https://example.com/photo.jpg"},

)

# Output includes metric metadata

print(f"Depth range: {output['depth_min_m']:.2f}m — {output['depth_max_m']:.2f}m")

print(f"Depth map URL: {output['depth_png']}")

JavaScript

import Replicate from "replicate";

const replicate = new Replicate();

const output = await replicate.run("wolfire/depth-anything-v3-metric-large", {

input: { image: "https://example.com/photo.jpg" },

});

console.log(`Depth range: ${output.depth_min_m}m — ${output.depth_max_m}m`);

console.log(`Depth map: ${output.depth_png}`);

cURL

curl -sS -X POST "https://api.replicate.com/v1/models/wolfire/depth-anything-v3-metric-large/predictions" \

-H "Authorization: Bearer $REPLICATE_API_TOKEN" \

-H "Content-Type: application/json" \

-d '{"input": {"image": "https://example.com/photo.jpg"}}'

Decoding the 16-bit depth map

The output depth_png is a 16-bit grayscale PNG. Convert pixel values back to meters using the scale_m_per_unit and offset_m fields from the output:

import numpy as np

from PIL import Image

arr = np.array(Image.open("depth.png"), dtype=np.uint16)

depth_meters = arr.astype(np.float32) * output["scale_m_per_unit"] + output["offset_m"]

The depth_min_m and depth_max_m fields give the 1st/99th percentile depth range used for encoding, so you know the effective metric bounds without decoding.

Inputs

| Parameter | Type | Default | Description |

|---|---|---|---|

image |

file/URL | (required) | Input image |

focal_length_px |

float | 0 |

Focal length in pixels. 0 = auto-estimate using 60-degree HFOV heuristic |

max_process_res |

int | 0 |

Cap inference resolution (0 = server default of 1120px). Higher = sharper depth but more VRAM |

return_raw_depth |

bool | false |

Include depth_npz with float32 metric depth (no quantization loss) |

include_base64 |

bool | true |

Include depth_png_base64 for inline transport (no extra download) |

Outputs

| Field | Description |

|---|---|

depth_png |

16-bit grayscale PNG depth map (hosted URL) |

image |

Alias of depth_png (for Replicate UI preview cards) |

depth_npz |

Float32 metric depth in NPZ format (when return_raw_depth=true) |

depth_png_base64 |

Base64-encoded 16-bit PNG (when include_base64=true) |

depth_min_m |

Near depth bound in meters (1st percentile) |

depth_max_m |

Far depth bound in meters (99th percentile) |

scale_m_per_unit |

Multiply u16 pixel value by this to get meters |

offset_m |

Add this after scaling to get meters |

focal_length_used |

Focal length used for metric conversion (pixels) |

process_res_used |

Actual inference resolution used |

contract_version |

Output schema version (da3-metric/v1) |

model_name |

DA3METRIC-LARGE |

model_ref |

HuggingFace model reference |

model_commit |

Pinned DA3 source commit for reproducibility |

Use cases

- 3D photo effects / parallax — generate depth-based parallax from a single photo

- Robotics & SLAM — metric depth for obstacle avoidance and mapping

- AR/VR content — depth-aware compositing and occlusion

- Visual effects — depth-of-field, fog, and atmospheric perspective

- Point cloud generation — combine with camera intrinsics for 3D reconstruction

License

Apache 2.0 — the underlying DA3 model and this wrapper are both Apache-licensed.

Citation

@article{depth_anything_3,

title={Depth Anything 3: Recovering the Visual Space from Any Views},

author={Lin, Haotong and Chen, Yilun and Liew, Jun Hao and Luo, Jiashi and others},

journal={arXiv preprint arXiv:2511.10647},

year={2025}

}