Readme

MVDream for Multi-View Image Generation

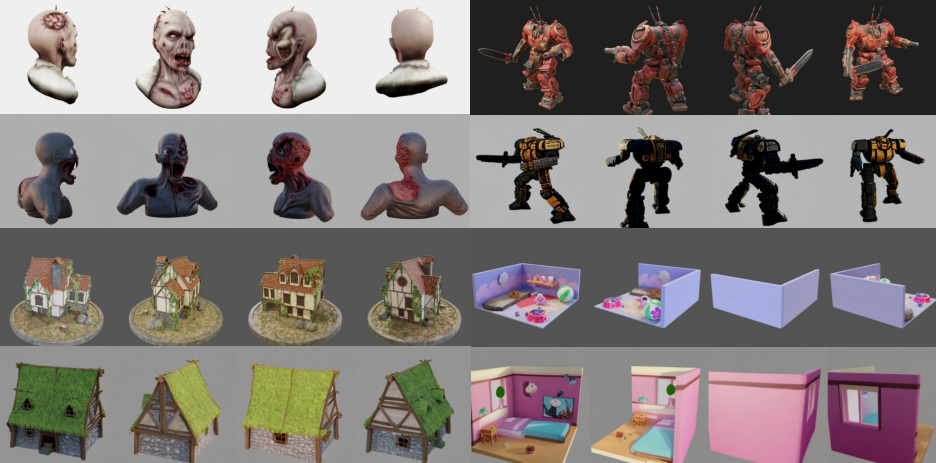

MVDream is a multi-view image generation model based on Stable Diffusion 2.1. It can generate images from text prompts and camera poses, making it possible to generate images from multiple views. Generated images can later be used to generate 3D assets. Cog-wrapper is adapted from the [official repository.] (https://github.com/bytedance/MVDream).

Abstract: We introduce MVDream, a multi-view diffusion model that is able to generate consistent multi-view images from a given text prompt. Learning from both 2D and 3D data, a multi-view diffusion model can achieve the generalizability of 2D diffusion models and the consistency of 3D renderings. We demonstrate that such a multi-view prior can serve as a generalizable 3D prior that is agnostic to 3D representations. It can be applied to 3D generation via Score Distillation Sampling, significantly enhancing the consistency and stability of existing 2D-lifting methods. It can also learn new concepts from a few 2D examples, akin to DreamBooth, but for 3D generation.

See the paper for more details.

API Usage

To use MVDream, simply describe the scene in natural language, and set stable diffusion generation parameters, camera elevation and/or azimuth angle span if you wish. The model will generate a consistent set of images from different views. API has the following inputs:

- prompt: What you want to generate expressed in natural language

- image_size: Width and height of the generated images. allowed values are 128, 256, 512, 1024. Note, larger is better, but slower.

- num_frames: Number of views to generate.

- num_inference_steps: Number of diffusion steps. Higher values will lead to better quality, but slower generation.

- guidance_scale: How much to guide the generation process with the prompt. Higher values will lead to generation that is closer to the prompt, but less diverse or maybe of lower quality.

- camera_elevation: Elevation angle of the camera.

- camera_azimuth: Azimuth angle of the camera in the first view.

- camera_azimuth_span: Total span of the azimuth angle. For example if the span is kept as 360 degrees and num_frames is set to 5 then in each view azimuth angle will be incremented by 360/5=72 degrees.

- seed: Random seed for the generation process. If not specified, a random seed will be used.

References

@article{shi2023MVDream,

author = {Shi, Yichun and Wang, Peng and Ye, Jianglong and Mai, Long and Li, Kejie and Yang, Xiao},

title = {MVDream: Multi-view Diffusion for 3D Generation},

journal = {arXiv:2308.16512},

year = {2023},

}