Run Stable Diffusion on your M1 Mac’s GPU

Stable Diffusion is open source, so anyone can run and modify it. That openness is what has driven the abundance of creations over the past week.

You can run Stable Diffusion in the cloud on Replicate, but it’s also possible to run it locally. As well as generating predictions, you can hack on it, modify it, and build new things. Getting it working on an M1 Mac’s GPU is a little fiddly, so we’ve created this guide to show you how to do it.

All credit for this goes to everyone who contributed to this fork of stable-diffusion on GitHub and figured it all out in this GitHub thread. We’re merely messengers of their great work.

One thing we’ve done on top of previous work: use pip instead of Conda to install dependencies. It’s much easier to set up and shouldn’t need to compile anything because it uses binary wheels.

Prerequisites

- A Mac with an M1 or M2 chip.

- 16GB RAM or more. 8GB of RAM works, but it is extremely slow.

- macOS 12.3 or higher.
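If you'd like to check these prerequisites from a terminal, here is a minimal sketch. The helper name `version_ge` is our own invention for illustration. The script reads the chip architecture with `uname -m`, the macOS version with `sw_vers`, and installed memory with `sysctl`, and it only runs those checks on macOS.

```shell
# version_ge A B: succeed when dotted version A is greater than or equal to B.
# (Hypothetical helper; compares each dot-separated field numerically.)
version_ge() {
  awk -v a="$1" -v b="$2" 'BEGIN {
    n = split(a, x, "."); m = split(b, y, ".")
    max = (n > m) ? n : m
    for (i = 1; i <= max; i++) {
      if (x[i] + 0 > y[i] + 0) exit 0
      if (x[i] + 0 < y[i] + 0) exit 1
    }
    exit 0
  }'
}

if [ "$(uname -s)" = "Darwin" ]; then
  arch=$(uname -m)                                    # arm64 on Apple silicon
  os_version=$(sw_vers -productVersion)               # for example 12.5.1
  ram_gb=$(( $(sysctl -n hw.memsize) / 1073741824 ))  # bytes to GiB

  if [ "$arch" = "arm64" ]; then
    echo "chip: ok ($arch)"
  else
    echo "chip: expected arm64, found $arch"
  fi

  if version_ge "$os_version" 12.3; then
    echo "macOS: ok ($os_version)"
  else
    echo "macOS: $os_version is older than 12.3"
  fi

  if [ "$ram_gb" -ge 16 ]; then
    echo "RAM: ok (${ram_gb} GB)"
  else
    echo "RAM: ${ram_gb} GB, expect slow generation"
  fi
else
  echo "Not macOS, skipping prerequisite checks"
fi
```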

Set up Python

You need Python 3.10 or above to run Stable Diffusion. Run python3 -V to see which Python version you have installed:

$ python3 -V
Python 3.10.6

If it’s 3.10 or above, like here, you’re good to go! Skip on over to the next step.

Otherwise, you’ll need to install Python 3.10. The easiest way to do that is with Homebrew. First, install Homebrew if you haven’t already.

Then, install the latest version of Python:

brew update
brew install python

Now if you run python3 -V you should have 3.10 or above. You might need to reopen your console to make it work.
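If you'd rather not eyeball the version number, something like the following could do the comparison for you. This is only a sketch: `py_version_ok` is a hypothetical helper that parses the text `python3 -V` prints and accepts 3.10 or newer.

```shell
# py_version_ok "Python 3.10.6": succeed when the reported version is 3.10 or newer.
# (Hypothetical helper; expects the output format of `python3 -V`.)
py_version_ok() {
  ver=${1#Python }     # strip the leading "Python "
  major=${ver%%.*}     # text before the first dot
  rest=${ver#*.}       # text after the first dot
  minor=${rest%%.*}    # second field
  [ "$major" -gt 3 ] || { [ "$major" -eq 3 ] && [ "$minor" -ge 10 ]; }
}

if command -v python3 >/dev/null 2>&1; then
  found=$(python3 -V 2>&1)
  if py_version_ok "$found"; then
    echo "$found is new enough"
  else
    echo "$found is too old, install Python 3.10 or above"
  fi
else
  echo "python3 not found on PATH"
fi
```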

Clone the repository and install the dependencies

Run this to clone the fork of Stable Diffusion:

git clone -b apple-silicon-mps-support https://github.com/bfirsh/stable-diffusion.git
cd stable-diffusion
mkdir -p models/ldm/stable-diffusion-v1/

Then, set up a virtualenv to install the dependencies:

python3 -m pip install virtualenv
python3 -m virtualenv venv

Activate the virtualenv:

source venv/bin/activate

(You’ll need to run this command again any time you want to run Stable Diffusion.)
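It's easy to forget to activate the virtualenv in a new terminal. Activation scripts export a `VIRTUAL_ENV` variable, so a quick check like this sketch can tell you whether the virtualenv is active. The function name `in_venv` is ours, for illustration only.

```shell
# in_venv: succeed when a virtualenv is active in the current shell.
# Activation exports VIRTUAL_ENV with the environment's path.
in_venv() {
  [ -n "${VIRTUAL_ENV:-}" ]
}

if in_venv; then
  echo "virtualenv active: $VIRTUAL_ENV"
else
  echo "no virtualenv active; run: source venv/bin/activate"
fi
```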

Then, install the dependencies:

pip install -r requirements.txt

If you’re seeing errors like Failed building wheel for onnx you might need to install these packages:

brew install cmake protobuf rust
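Once the dependencies are installed, you may want to confirm that PyTorch can actually see the GPU. The sketch below assumes requirements.txt installs a PyTorch build that exposes `torch.backends.mps` (PyTorch 1.12 or later). We haven't verified that against the fork, so treat it as a guess. `mps_status` is a hypothetical helper that prints a single status word.

```shell
# mps_status: print one of mps-available, mps-unavailable, torch-missing, python-error.
# Assumption: the installed PyTorch exposes torch.backends.mps (1.12 or later).
mps_status() {
  python3 - 2>/dev/null <<'EOF' || echo "python-error"
try:
    import torch
except ImportError:
    print("torch-missing")
else:
    mps = getattr(torch.backends, "mps", None)  # absent in older PyTorch builds
    ok = mps is not None and mps.is_available()
    print("mps-available" if ok else "mps-unavailable")
EOF
}

echo "GPU backend status: $(mps_status)"
```

Run it with the virtualenv active so that `python3` resolves to the interpreter that has the dependencies installed.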

Download the weights

Go to the Hugging Face repository, read and understand the license, then click “Access repository”.

Download sd-v1-4.ckpt (~4 GB) on that page and save it as models/ldm/stable-diffusion-v1/model.ckpt in the directory you created above.
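A partial download is an easy mistake to miss, so it can be worth checking the file before moving on. In this sketch, `check_weights` is a hypothetical helper and the 3 GB default threshold is our own rough assumption for a file described as about 4 GB. It is not an official checksum or size.

```shell
# check_weights PATH [MIN_BYTES]: succeed when PATH exists and is at least MIN_BYTES long.
# Assumption: a complete ~4 GB checkpoint is larger than 3,000,000,000 bytes.
check_weights() {
  path=$1
  min_bytes=${2:-3000000000}
  if [ ! -f "$path" ]; then
    echo "missing: $path"
    return 1
  fi
  size=$(wc -c < "$path" | tr -d ' ')   # byte count; tr strips padding some wc builds add
  if [ "$size" -lt "$min_bytes" ]; then
    echo "too small ($size bytes): $path"
    return 1
  fi
  echo "ok: $path ($size bytes)"
}

# Run from the stable-diffusion directory.
check_weights models/ldm/stable-diffusion-v1/model.ckpt ||
  echo "Download sd-v1-4.ckpt again and save it to that path"
```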

Run it!

Now, you can run Stable Diffusion:

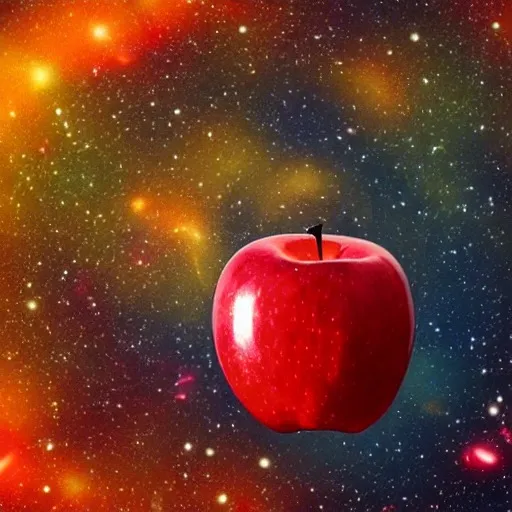

python scripts/txt2img.py \
  --prompt "a red juicy apple floating in outer space, like a planet" \
  --n_samples 1 --n_iter 1 --plms

Your output’s in outputs/txt2img-samples/. That’s it.
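If you want to try several prompts in one go, a small loop around the same command is one way to do it. This is a sketch, not part of the fork: `run_prompts` and the `DRY_RUN` switch are hypothetical names, the second prompt is made up, and the only flags used are the ones shown above.

```shell
# run_prompts: read one prompt per line on stdin and run txt2img.py for each.
# Set DRY_RUN=1 to print the commands instead of executing them.
run_prompts() {
  while IFS= read -r prompt; do
    [ -n "$prompt" ] || continue   # skip blank lines
    if [ "${DRY_RUN:-0}" = "1" ]; then
      printf 'python scripts/txt2img.py --prompt "%s" --n_samples 1 --n_iter 1 --plms\n' "$prompt"
    else
      # </dev/null stops python from consuming the remaining prompts on stdin
      python scripts/txt2img.py --prompt "$prompt" --n_samples 1 --n_iter 1 --plms </dev/null
    fi
  done
}

# Dry run: prints the two commands without generating anything.
printf '%s\n' \
  "a red juicy apple floating in outer space, like a planet" \
  "a green pear on a wooden table" | DRY_RUN=1 run_prompts
```

Drop `DRY_RUN=1` and run it from the stable-diffusion directory, with the virtualenv active, to generate the images for real.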

Next steps

- If you’re struggling to get this set up, ask in our Discord for some help.

- You can run Stable Diffusion with an API if you just want it running in the cloud.

- You might want to dig into the source code to see what you can modify. For inspiration, take a look at Deforum’s Colab notebook which can do a whole bunch of stuff, like image-to-image, interpolation, videos, and so on.

- You can push custom models to Replicate if you want to host your Stable Diffusion creations.

Happy hacking!

Want to read more like this and learn how to hack on Stable Diffusion? Follow us on Twitter.