How to make remarkable videos with Seedance 2.0


AI video used to be utterly bad. (We’ve all seen Will Smith eat spaghetti more times than we can count, so I’ll spare you.)

Last year, however, AI video really began to take off, with front-runners like Google’s Veo 3 series and Kling from Kuaishou. Each new model release brought incremental improvements in prompt adherence, audio integration, and the telltale “AI look.”

Seedance 2.0 is the largest step change we’ve seen in months. You can make movies with this thing.

A catastrophic collision between two massive space stations in low Earth orbit. Metal shears apart in slow motion as the stations grind into each other, sending a hailstorm of debris spiraling outward. Entire modules crumple like tin cans. Pressurized compartments blow out in violent bursts of crystallizing atmosphere. Solar panels shatter and cartwheel into the void. The camera tumbles through the wreckage as an astronaut ragdolls past, arms flailing. Explosions ripple down the station spine. Earth looms enormous in the background, serene and indifferent. Hyper-realistic, catastrophic scale, ISO debris field, 8k, Gravity collision sequence energy.

A daring aerial rogue diving on a bio-mechanical glider through a chaotic floating-island bazaar, weaving effortlessly through airborne merchants, dodging passing airships, flocking griffins, and tethered trading posts. He plummets past crumbling stone arches, busy rope bridges, and cascading waterfalls, barrel-rolling through narrow gaps with precision and style. Cinematic tracking shots follow his descent, enhanced by dynamic motion blur and ethereal dappled sunlight reflecting off crystal formations and mist. The sky-city pulses with an energetic fantasy vibe—flapping wings, shouting vendors, and nonstop vertical motion. Ultra-realistic detail with an epic high-fantasy action aesthetic, capturing speed, agility, and fearless momentum through the clouds.

A high-speed car chase on a rain-drenched highway at night. Two muscle cars weave through heavy traffic at 140mph, headlights slicing through the downpour. One car clips a semi-truck sending sparks showering across six lanes. The camera is mounted on the hood of the lead car, rain hammering the lens. Neon highway signs blur overhead. The pursuing car fishtails through a gap between two buses. Tires hydroplane on standing water. Hyper-realistic, motion blur, reflections on wet asphalt, 8k, Michael Mann cinematography.

A massive dinosaur stampede through a dense jungle. Dozens of brachiosaurus and parasaurolophus crash through the tree line, their enormous bodies snapping trunks like twigs. The camera is at ground level, shaking with each thundering footstep. Dust and debris fill the air. A flock of pterodactyls bursts from the canopy overhead. The stampede parts around a fallen tree, the camera narrowly avoiding being trampled. Hyper-realistic, jungle foliage flying everywhere, Jurassic Park energy, 8k, Spielberg cinematography.

A fighter jet launches from an aircraft carrier at sunset. The catapult fires and the jet accelerates from zero to 170mph in two seconds, afterburners blazing blue-white. Steam erupts from the catapult track. The camera follows from the deck as the jet clears the bow and drops slightly before climbing steeply into the orange sky, leaving twin contrails. Deck crew brace against the jet blast. The ocean stretches to the horizon. Hyper-realistic, Top Gun cinematography, 8k, the screaming roar of twin turbofan engines and the metallic slam of the catapult.

A lone explorer treks through an ancient overgrown temple deep in the jungle. Massive stone columns wrapped in vines tower overhead. Shafts of golden light pierce through gaps in the crumbling ceiling, illuminating floating dust and insects. The explorer pushes through a curtain of hanging roots and discovers a vast underground chamber with a still pool of water reflecting the ruins above. Fireflies drift through the space. Hyper-realistic, Indiana Jones atmosphere, 8k, epic discovery moment, dripping water echoing through the chamber.

A massive tidal wave crashes into a coastal city. Buildings crumble as the wall of water surges through the streets. Cars are swept up and tumble through the flood. The camera captures the destruction from a rooftop as the wave passes below, water exploding against skyscrapers. Debris and foam churn in every direction. The sky is dark with storm clouds. Hyper-realistic, catastrophic scale, 8k, Roland Emmerich disaster movie, the deafening roar of a million tons of water.

A dramatic horseback chase through a canyon at golden hour. A rider on a black stallion gallops at full speed along a narrow ledge, red dust billowing behind them. The canyon walls tower on both sides, glowing amber in the low sun. The horse leaps over a gap in the trail, all four hooves off the ground, mane and tail streaming. The camera tracks alongside from a parallel ridge. Rocks crumble from the ledge edge. The rider looks back over their shoulder. Hyper-realistic, epic Western cinematography, 8k, thundering hooves echoing off canyon walls.

It’s quite a revolutionary video model.

This post covers some of the most practical and coolest capabilities of Seedance 2.0 so you know how to handle this incredible piece of technology. By the end, you’ll have the tricks you need to generate some genuinely wonderful video.

Reference anything

Most video models take a text prompt and give you a clip. Seedance 2.0 works differently. You can feed it up to 9 images, 3 video clips, 3 audio files, and a text prompt. The model understands how to use each piece. You can pull the composition from a photo, the camera movement from a video clip, the rhythm from an audio track, and describe how it all works together in words.

The process is something closer to directing than prompting.
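To make those limits concrete, here is a minimal sketch of a helper that checks your reference counts before you send a request. The limits (9 images, 3 video clips, 3 audio files) come from this post, and the `reference_images`, `reference_videos`, and `reference_audios` keys match the input names shown in the API example later on. The helper name `build_reference_input` and the example URLs are hypothetical, not part of any SDK.

```python
# Limits described in this post: up to 9 images, 3 video clips, 3 audio files.
REFERENCE_LIMITS = {
    "reference_images": 9,
    "reference_videos": 3,
    "reference_audios": 3,
}


def build_reference_input(prompt, images=(), videos=(), audios=()):
    """Assemble a model input dict, rejecting too many references.

    Hypothetical helper for illustration; it only builds a plain dict.
    """
    references = {
        "reference_images": list(images),
        "reference_videos": list(videos),
        "reference_audios": list(audios),
    }
    model_input = {"prompt": prompt}
    for key, urls in references.items():
        limit = REFERENCE_LIMITS[key]
        if len(urls) > limit:
            raise ValueError(f"{key}: got {len(urls)} items, limit is {limit}")
        if urls:  # leave unused reference types out of the request
            model_input[key] = urls
    return model_input


# Placeholder URLs; swap in your own hosted files.
example = build_reference_input(
    "Place the character in the interior and have him speak the line.",
    images=["https://example.com/interior.png", "https://example.com/character.png"],
    audios=["https://example.com/line.wav"],
)
print(example)
```

Failing early on a count mismatch is cheaper than waiting on a rejected request, and leaving out empty reference lists keeps the input dict close to the plain text-to-video case.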

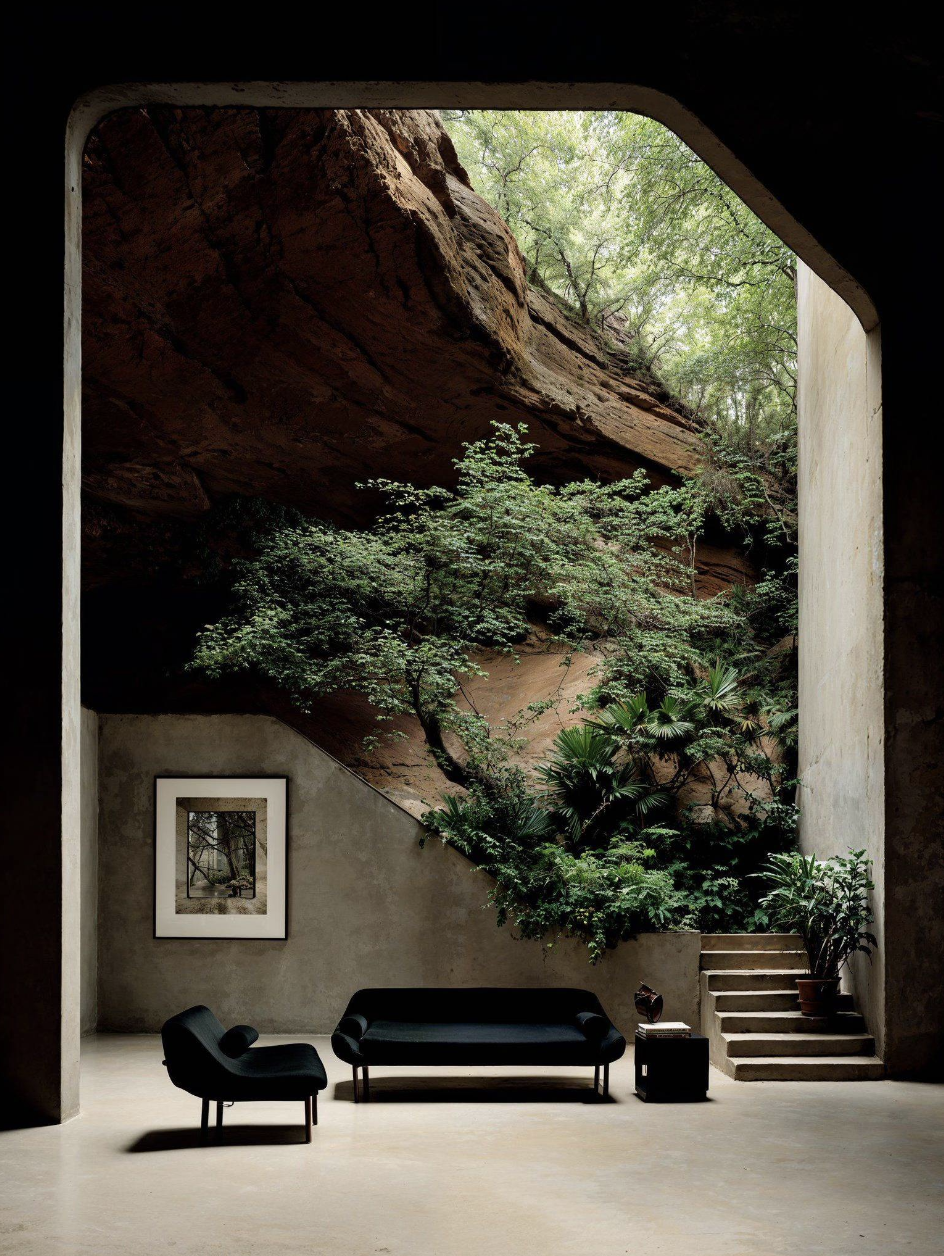

Here’s an example. Let’s place this character in this interior:

And let’s make him speak this audio (from resemble-ai/chatterbox-turbo):

To reference any input assets (images, video, or audio), we refer to each as [Image1] or [Audio1] in our prompt. For example:

[Image2] is in the interior of [Image1] where he is kept the style of [Image2], but the realism of [Image1] remains. He says [Audio1].

A couple of tips for this trick: type out the words of your input audio in the prompt itself, and set the video duration to the same length as your audio.
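Here is a rough sketch of both tips in Python, using only the standard library. It assumes your audio is a WAV file, that `duration` takes whole seconds (as in the API example later in this post), and that 1 second is a sensible lower bound. All function names are hypothetical, and the exact wording of the "He says" sentence is just one way to include the transcript.

```python
import io
import math
import wave

MAX_DURATION_S = 15  # this post describes generations of up to 15 seconds


def wav_duration_seconds(wav_file) -> float:
    """Return the length of a WAV file (path or binary file object) in seconds."""
    with wave.open(wav_file, "rb") as wav:
        return wav.getnframes() / wav.getframerate()


def matching_video_duration(audio_seconds: float) -> int:
    """Round the audio length up to whole seconds, capped at the 15 s limit."""
    return min(MAX_DURATION_S, max(1, math.ceil(audio_seconds)))


def dialogue_prompt(scene: str, transcript: str, audio_tag: str = "[Audio1]") -> str:
    """Append the audio tag and the typed-out transcript to a scene description."""
    return f'{scene} He says {audio_tag}: "{transcript}"'


def silent_wav(seconds: float, rate: int = 8000) -> io.BytesIO:
    """Build an in-memory silent WAV so the example runs without a real file."""
    buf = io.BytesIO()
    with wave.open(buf, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)  # 16-bit samples
        wav.setframerate(rate)
        wav.writeframes(b"\x00\x00" * int(seconds * rate))
    buf.seek(0)
    return buf


audio_seconds = wav_duration_seconds(silent_wav(6.5))
print(matching_video_duration(audio_seconds))
print(dialogue_prompt("[Image2] stands in the interior of [Image1].", "Welcome home."))
```

Rounding up rather than down leaves a little silence at the end instead of cutting off the last word.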

With the ability to reference, you can keep stylistic consistency in your videos. Take a look at this example where I fed in a couple of images of a particular style and asked Seedance 2.0 to morph them into one video. This could have taken days for a video editor to do.

Create fluid morphs between all of the photos

Didn’t even have to ask for the background music, but it works well for me!

You can see how references in Seedance 2.0 extend to a few common workflows:

- Character consistency: Provide reference images of a character to maintain their appearance across generations

- Motion transfer: Upload a video clip and the model reproduces its movement patterns in a new context

- Style and composition: Use an image as a visual reference for framing, color palette, or artistic style

- Audio-driven rhythm: Supply a music track and the model syncs cuts and movement to the beat

Audio from the same engine

Seedance 2.0 doesn’t generate video and then dub audio on top. Audio and video come from the same unified architecture, which means they’re synchronized at the millisecond level.

The model produces dual-channel stereo with multiple layered tracks. This means you can get a mix of background music, ambient sound effects, and character voiceover.

A close-up of a jazz pianist’s hands flying across the keys of a grand piano in a smoky nightclub. Each keystroke produces a visible ripple of warm amber light across the piano’s lacquered surface. The camera slowly pulls back to reveal the full band — upright bass, drums with brushes, a tenor saxophone. The musicians nod to each other, trading solos. Cigarette smoke curls through a single spotlight beam. Hyper-realistic, intimate jazz club atmosphere, 8k, the crisp attack of piano keys, walking bassline, brushed snare, breathy saxophone melody.

Every instrument is individually audible and synced to the musician’s movements. The piano keys, the walking bassline, the brushed snare — all generated together with the visuals, not layered on after.

The same applies to dialogue. Every word lands crisp and clean, fixed precisely to lip movements. And generally, it’s pretty easy to one-shot even long and complex dialogue.

A tight medium shot of two eccentric adults in typical, everyday clothing—one in a slightly oversized trench coat, the other in a weathered denim jacket—deep in a heated, animated conversation on a rainy West Village street corner. The one in the trench coat gestures wildly, the words “SOURDOUGH PRETZEL” appearing in pulsating electric blue: “It’s not just a pretzel, Arthur! It’s a sourdough pretzel!” The second guy says ‘Who cares. A pretzel’s a pretzel!’

Dealing with physics

What I love most about this model is its ability to handle intricate physics. This was a huge problem with prior video models. Any complex motion or interaction would be riddled with artifacts. With Seedance 2.0, stuff just works. Even crazy stuff.

I’m obsessed with creating high-octane space videos with this model, given how incredible and realistic the crashes can look.

A catastrophic collision between two massive space stations in low Earth orbit. Metal shears apart in slow motion as the stations grind into each other, sending a hailstorm of debris spiraling outward. Entire modules crumple like tin cans. Pressurized compartments blow out in violent bursts of crystallizing atmosphere. Solar panels shatter and cartwheel into the void. The camera tumbles through the wreckage as an isolated astronaut in a white EVA suit ragdolls past, arms flailing helplessly. Explosions ripple down the station’s spine. Earth looms enormous in the background, serene and indifferent. Hyper-realistic, catastrophic scale, orbital debris field, 8k, Gravity collision sequence energy.

Take a look at this example. We started with an input image for this one and just asked Seedance 2.0 to animate the scene. Typical video models would just have the vehicle move forward as a rigid body, but Seedance 2.0 takes the extra step of having the vehicle bob as it navigates the rough terrain. This is closer to the quality we would expect from a high-budget film.

Animate this image

The same physical understanding carries through to stylized output. Here, we started with an input image from Dreamina 3.1, also from ByteDance. Even rendered as an oil painting, fluid dynamics stay accurate: water moves with the right viscosity, splashes break apart correctly, surfaces behave like surfaces.

Animate this image

Multi-shot output with camera planning

Seedance 2.0 generates up to 15 seconds of video with multi-shot compositions. The model plans camera language from your prompt — cuts, transitions, tracking shots, push-ins — without you having to specify every camera move.

Time-coded multi-shot prompting

You can direct individual shots within a single 15-second generation by writing timestamps into the prompt.

Example Format:

[0-4s]: wide establishing shot, static camera, misty bamboo forest at dawn

[4-9s]: medium shot, slow push-in, the fighter steps forward

[9-15s]: close-up, orbit shot, the fighter strikes, slow motion

You could even just list out the scenes you want, but this time-coded approach allows you to really dial in the length of clips. What’s crazy is that it doesn’t hallucinate, even with this amount of dense and specific information in your prompt.

Each shot should specify camera position, subject action, and lighting state. Transition language between shots (“hard cut to,” “seamless morph into”) gives the model explicit cut instructions rather than letting it improvise.
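If you build prompts in code, the time-coded format is easy to generate from a shot list. The sketch below is one way to do it, not an official tool: the `Shot` class and `time_coded_prompt` function are hypothetical names, and the rule that shots must be back-to-back with no gaps is my own design choice rather than a documented model requirement.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    start: int  # seconds from the beginning of the clip
    end: int
    description: str  # camera position, subject action, lighting


def time_coded_prompt(shots, max_seconds=15, style=""):
    """Render shots as "[start-ends]: description" blocks, one per shot."""
    if not shots:
        raise ValueError("at least one shot is required")
    blocks = []
    cursor = 0
    for shot in shots:
        if shot.start != cursor:
            raise ValueError(f"shot should start at {cursor}s, got {shot.start}s")
        if shot.end <= shot.start:
            raise ValueError(f"shot {shot.start}-{shot.end}s has no length")
        blocks.append(f"[{shot.start}-{shot.end}s]: {shot.description}")
        cursor = shot.end
    if cursor > max_seconds:
        raise ValueError(f"shots run to {cursor}s, limit is {max_seconds}s")
    if style:
        blocks[-1] += f" {style}"  # style tags trail the final shot
    return "\n\n".join(blocks)


shots = [
    Shot(0, 4, "wide establishing shot, static camera, misty bamboo forest at dawn"),
    Shot(4, 9, "medium shot, slow push-in, the fighter steps forward"),
    Shot(9, 15, "close-up, orbit shot, the fighter strikes, slow motion"),
]
print(time_coded_prompt(shots))
```

Keeping shots as data makes it simple to retime a sequence (say, stretching the close-up by a second) without hand-editing every timestamp.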

Here are four examples that show what time-coded prompting can do:

Samurai at sunset — dolly zoom and crane shot:

[0-4s]: Low-angle wide shot from ground level, static, a lone samurai silhouetted against a blood-red sunset on a windswept ridge, tall grass bending in the wind, the distant rumble of approaching thunder.

[4-8s]: Dolly zoom on the samurai’s face as realization hits — the background stretches and warps while the subject stays locked in frame, a Hitchcock vertigo effect, drums building.

[8-12s]: Whip pan to a sweeping crane shot rising above the ridge, revealing an army of a thousand torches advancing through the valley below, war horns blaring, smoke drifting across the landscape.

[12-15s]: Snap cut to extreme close-up, the samurai’s hand grips the katana hilt, knuckles white, a single drop of sweat falls in slow motion, the sound of a blade being drawn rings out, then dead silence. Hyper-realistic, 8k, Akira Kurosawa cinematography, Hans Zimmer sound design.

Perfume commercial — product videos, UGC, etc.:

(0-3s) Macro shot of a luxury perfume bottle among scattered pink peonies, shallow depth of field, petals floating in warm afternoon light, soft ambient music.

(3-7s) Camera glides closer, a feminine hand enters frame from the right, fingers gently touch the glass bottle, the sound of silk rustling.

(7-12s) Hard cut to slow-motion spray, golden mist diffuses through the air, particles catching rim light against a dark background, the hiss of the atomizer.

(12-15s) Seamless pull-out to hero frame, product centered, volumetric lighting, minimal cream background, elegant silence. Hyper-realistic, 8k, fashion commercial cinematography.

Mars landing — Interstellar-style isolation:

[0-4s]: Wide shot, static tripod, a lone astronaut stands on a red Martian plain at dusk, dust swirling at their boots, the low hum of wind across the desert.

[4-8s]: Slow push-in to medium shot, the astronaut kneels and places a gloved hand on the soil, visor reflecting the sunset, breathing audible inside the helmet.

[8-12s]: Hard cut to close-up of the gloved hand lifting a handful of red dust, grains falling in low gravity slow motion, each particle catching the fading light.

[12-15s]: Extreme close-up on the visor reflection showing Earth as a tiny blue dot, static hold, a single heartbeat, then silence. Hyper-realistic, 8k, Gravity cinematography, Interstellar sound design.

Neon Tokyo — Blade Runner rain sequence:

[0-4s]: Wide establishing shot, static camera, a neon-drenched Tokyo alley at night, rain pouring, reflections pooling on wet asphalt, the distant murmur of city traffic and rain hitting metal awnings.

[4-8s]: Medium shot, slow dolly forward, a figure in a black trench coat walks toward camera under a red paper umbrella, neon signs flickering on their face.

[8-12s]: Seamless morph into close-up tracking shot, the figure’s hand drops the umbrella, rain hits their face, they look up at the sky, the sound of rain intensifies.

[12-15s]: Hard cut to extreme close-up of raindrops hitting a neon puddle in slow motion, each drop exploding into rings of reflected color, bass rumble fading to silence. Hyper-realistic, 8k, Blade Runner 2049 cinematography, Roger Deakins lighting.

All four follow the same escalation pattern from filmmaking: wide > medium > close-up > extreme close-up. That progression maps naturally onto a 15-second window and gives the model clear structure to work with.
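If you write time codes by hand, a quick sanity check can catch a gap or overlap before you spend a generation on it. This sketch parses both bracket styles used in the examples above, `[0-4s]:` and `(0-3s)`, and reports anything that does not tile the clip cleanly. The function names are hypothetical, and treating 15 seconds as the expected total is an assumption you can override for shorter clips.

```python
import re

# Matches both styles used in the examples: "[0-4s]:" and "(0-3s)".
TIME_CODE = re.compile(r"[\[(](\d+)-(\d+)s[\])]")


def shot_ranges(prompt):
    """Return the (start, end) pairs of every time code found in a prompt."""
    return [(int(a), int(b)) for a, b in TIME_CODE.findall(prompt)]


def check_shot_ranges(ranges, total_seconds=15):
    """List problems: gaps, overlaps, or a clip that does not end on time."""
    problems = []
    cursor = 0
    for start, end in ranges:
        if start != cursor:
            problems.append(f"expected a shot starting at {cursor}s, found {start}s")
        if end <= start:
            problems.append(f"shot {start}-{end}s has no length")
        cursor = end
    if cursor != total_seconds:
        problems.append(f"shots end at {cursor}s, not {total_seconds}s")
    return problems


perfume = (
    "(0-3s) Macro shot of a luxury perfume bottle. "
    "(3-7s) Camera glides closer. "
    "(7-12s) Hard cut to slow-motion spray. "
    "(12-15s) Seamless pull-out to hero frame."
)
ranges = shot_ranges(perfume)
print([end - start for start, end in ranges])  # length of each shot in seconds
print(check_shot_ranges(ranges))  # an empty list means the shots tile the clip
```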

Getting started with the API

Here’s how to generate a video with Seedance 2.0:

Python:

```python
import replicate

output = replicate.run(
    "bytedance/seedance-2.0",
    input={
        "prompt": "A fighter jet launches from an aircraft carrier at sunset. The catapult fires and the jet accelerates, afterburners blazing. Steam erupts from the catapult track. The camera follows from the deck as the jet clears the bow and climbs steeply into the orange sky. Hyper-realistic, Top Gun cinematography, 8k.",
        "duration": 10,
        "resolution": "720p",
        "aspect_ratio": "16:9",
        "generate_audio": True,
        # "reference_images": ["https://..."] for character/style reference
        # "reference_videos": ["https://..."] for motion transfer
        # "reference_audios": ["https://..."] for audio-driven generation
    }
)

print(output)
```

JavaScript:

```javascript
import Replicate from "replicate";

const replicate = new Replicate();

const output = await replicate.run(
  "bytedance/seedance-2.0",
  {
    input: {
      prompt: "A fighter jet launches from an aircraft carrier at sunset. The catapult fires and the jet accelerates, afterburners blazing. Steam erupts from the catapult track. The camera follows from the deck as the jet clears the bow and climbs steeply into the orange sky. Hyper-realistic, Top Gun cinematography, 8k.",
      duration: 10,
      resolution: "720p",
      aspect_ratio: "16:9",
      generate_audio: true,
    }
  }
);

console.log(output);
```

Prompting tips

Here are my final prompting tips to juice the most out of this model:

- Overdescribe. Pack tons of detail into your prompt. Don’t write “a car chase”; write “a high-speed night pursuit through rain-slicked Tokyo streets, neon reflections streaking across wet asphalt, headlights cutting through mist.”

- Describe the audio, not just the visuals. Since the model generates audio natively, describing sound in your prompt steers it toward the audio you want. “The screaming roar of twin turbofan engines and the metallic slam of the catapult” gives the model clear audio direction.

- Use “hyper-realistic, 8k” as quality anchors. These terms push the model toward its highest fidelity output.

- Describe the camera, not just the subject. “The camera is mounted on the hood of the lead car,” “swift dolly zoom,” and “the camera is at ground level” ground the shot and produce more convincing results.

- Combine reference types for maximum control. Use an image for character appearance, a video clip for motion style, and an audio track for rhythm.
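One way to make these tips a habit is to assemble prompts from named parts so you never forget the camera or the audio. The sketch below is only an illustration: `compose_prompt` is a hypothetical helper, and the ordering (scene, then camera, then quality anchors and sound) simply mirrors the prompts shown earlier in this post.

```python
def _sentence(text):
    """Trim whitespace and make sure the text ends with terminal punctuation."""
    text = text.strip()
    return text if text.endswith((".", "!", "?")) else text + "."


def compose_prompt(scene, camera, audio, anchors=("Hyper-realistic", "8k")):
    """Join scene detail, camera direction, quality anchors, and audio cues."""
    tail = ", ".join([*anchors, audio.strip().rstrip(".")])
    return " ".join([_sentence(scene), _sentence(camera), _sentence(tail)])


prompt = compose_prompt(
    scene="Two muscle cars weave through heavy traffic on a rain-drenched highway at night",
    camera="The camera is mounted on the hood of the lead car",
    audio="the hiss of tires on wet asphalt",
)
print(prompt)
```

Because every argument is required, a prompt with no camera or audio direction fails loudly instead of quietly producing a vaguer clip.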
