Readme

Tianheng Cheng (2,3,*), Lin Song (1,📧,*), Yixiao Ge (1,🌟,2), Wenyu Liu (3), Xinggang Wang (3,📧), Ying Shan (1,2)

* Equal contribution 🌟 Project lead 📧 Corresponding author

1 Tencent AI Lab, 2 ARC Lab, Tencent PCG

3 Huazhong University of Science and Technology

Updates

🔥[2024-2-10]: We provide the fine-tuning and data details for fine-tuning YOLO-World on the COCO dataset or on custom datasets!

[2024-2-3]: We support the Gradio demo now in the repo and you can build the YOLO-World demo on your own device!

[2024-2-1]: We’ve released the code and weights of YOLO-World now!

[2024-2-1]: We have deployed the YOLO-World demo on HuggingFace 🤗. You can try it now!

[2024-1-31]: We are excited to launch YOLO-World, a cutting-edge real-time open-vocabulary object detector.

Highlights

This repo contains the PyTorch implementation, pre-trained weights, and pre-training/fine-tuning code for YOLO-World.

-

YOLO-World is pre-trained on large-scale datasets, including detection, grounding, and image-text datasets.

-

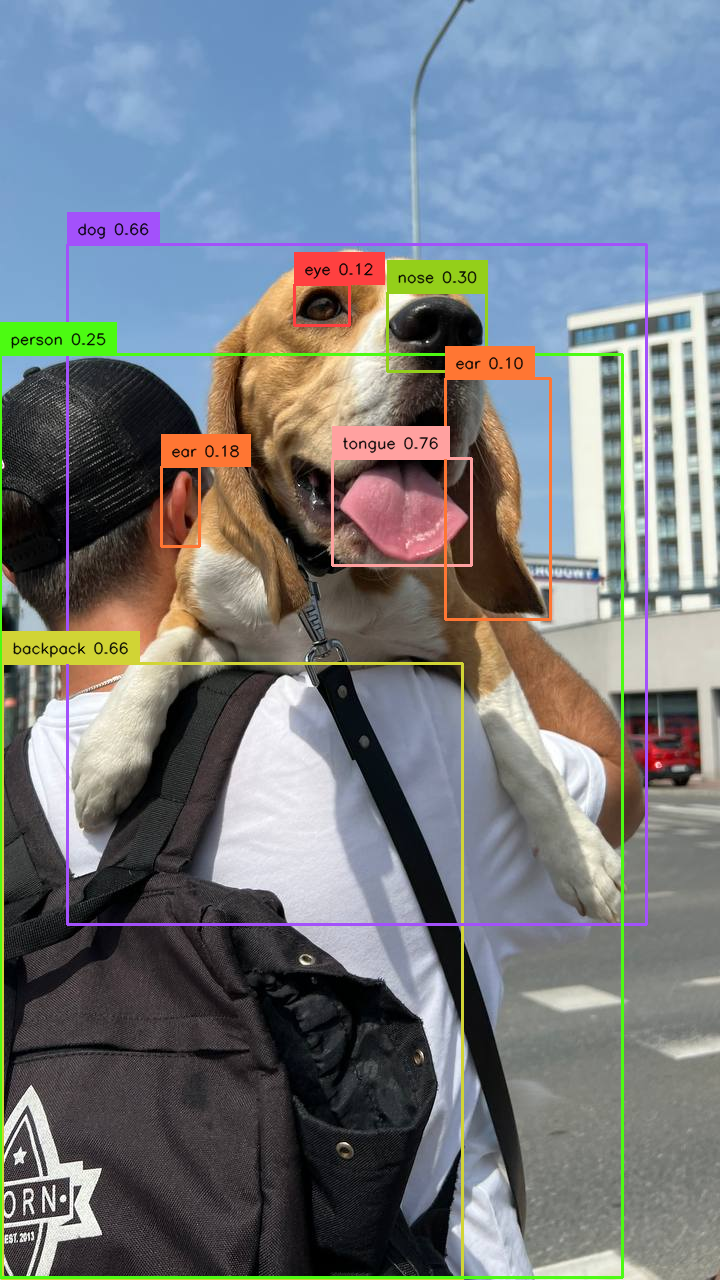

YOLO-World is the next-generation YOLO detector, with a strong open-vocabulary detection capability and grounding ability.

-

YOLO-World presents a prompt-then-detect paradigm for efficient user-vocabulary inference, which re-parameterizes vocabulary embeddings as parameters into the model and achieve superior inference speed. You can try to export your own detection model without extra training or fine-tuning in our online demo!
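The prompt-then-detect idea from the highlights can be illustrated with a small, self-contained sketch. It is not the YOLO-World code: `toy_text_encoder` is a hypothetical hash-based stand-in for a real text encoder, and `OfflineVocabulary` is an illustrative name. The point it shows is that the vocabulary is encoded once, and inference afterwards only uses the cached embeddings as fixed weights.

```python
import hashlib
import math

EMBED_DIM = 8  # toy size; a real text encoder produces far larger vectors


def toy_text_encoder(prompt):
    """Hypothetical stand-in for a text encoder: hashes a prompt into a unit vector."""
    digest = hashlib.sha256(prompt.encode("utf-8")).digest()
    vec = [b / 255.0 - 0.5 for b in digest[:EMBED_DIM]]
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class OfflineVocabulary:
    """Encodes the user vocabulary once and keeps the embeddings as fixed weights."""

    def __init__(self, prompts):
        self.prompts = list(prompts)
        # "Prompt" step: the text encoder runs here and never again.
        self.weights = [toy_text_encoder(p) for p in self.prompts]

    def classify(self, region_feature):
        # "Detect" step: only dot products against the cached weights.
        scores = [
            sum(w * x for w, x in zip(w_vec, region_feature))
            for w_vec in self.weights
        ]
        return self.prompts[scores.index(max(scores))]


vocab = OfflineVocabulary(["person", "dog", "traffic light"])
# Pretend the image branch produced a region feature aligned with "dog".
region = toy_text_encoder("dog")
print(vocab.classify(region))
```

Because the text encoder is only called in `__init__`, changing the vocabulary means building a new `OfflineVocabulary`, while per-image cost stays independent of the encoder.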


Abstract

The You Only Look Once (YOLO) series of detectors have established themselves as efficient and practical tools. However, their reliance on predefined and trained object categories limits their applicability in open scenarios. Addressing this limitation, we introduce YOLO-World, an innovative approach that enhances YOLO with open-vocabulary detection capabilities through vision-language modeling and pre-training on large-scale datasets. Specifically, we propose a new Re-parameterizable Vision-Language Path Aggregation Network (RepVL-PAN) and region-text contrastive loss to facilitate the interaction between visual and linguistic information. Our method excels in detecting a wide range of objects in a zero-shot manner with high efficiency. On the challenging LVIS dataset, YOLO-World achieves 35.4 AP with 52.0 FPS on V100, which outperforms many state-of-the-art methods in terms of both accuracy and speed. Furthermore, the fine-tuned YOLO-World achieves remarkable performance on several downstream tasks, including object detection and open-vocabulary instance segmentation.
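As a rough intuition for the region-text contrastive loss named in the abstract, the sketch below computes a plain cross-entropy over region-to-text similarities. This is a simplified assumption, not the loss as implemented in this repo: the function name, the `temperature` parameter, and the way `targets` assigns one text index per region are all illustrative choices.

```python
import math


def region_text_contrastive_loss(region_embs, text_embs, targets, temperature=0.1):
    """Mean cross-entropy between region-to-text similarities and assigned text indices."""
    total = 0.0
    for region, target in zip(region_embs, targets):
        # Similarity of this region to every text embedding, scaled by the temperature.
        logits = [
            sum(r * t for r, t in zip(region, text)) / temperature
            for text in text_embs
        ]
        # Numerically stable log-sum-exp for the softmax normalizer.
        peak = max(logits)
        log_norm = peak + math.log(sum(math.exp(v - peak) for v in logits))
        total += log_norm - logits[target]
    return total / len(targets)


texts = [[1.0, 0.0], [0.0, 1.0]]      # toy embeddings for two category prompts
regions = [[1.0, 0.0], [0.0, 1.0]]    # toy region embeddings
loss_matched = region_text_contrastive_loss(regions, texts, targets=[0, 1])
loss_swapped = region_text_contrastive_loss(regions, texts, targets=[1, 0])
print(loss_matched < loss_swapped)
```

The loss is small when each region embedding is closest to its assigned text embedding and grows when the assignment is swapped, which is the behavior a contrastive objective of this kind is meant to encourage.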

Main Results

We’ve pre-trained YOLO-World-S/M/L from scratch and evaluated them on LVIS val-1.0 and LVIS minival. We provide the pre-trained model weights and training logs for applications, research, or reproducing the results.

Zero-shot Inference on LVIS dataset

| Model | Pre-train Data | APfixed | APmini | APr (mini) | APc (mini) | APf (mini) | APval | APr (val) | APc (val) | APf (val) | Weights |
|---|---|---|---|---|---|---|---|---|---|---|---|
| YOLO-World-S | O365+GoldG | 26.2 | 24.3 | 16.6 | 22.1 | 27.7 | 17.8 | 11.0 | 14.8 | 24.0 | HF Checkpoints 🤗 |
| YOLO-World-M | O365+GoldG | 31.0 | 28.6 | 19.7 | 26.6 | 31.9 | 22.3 | 16.2 | 19.0 | 28.7 | HF Checkpoints 🤗 |
| YOLO-World-L | O365+GoldG | 35.0 | 32.5 | 22.3 | 30.6 | 36.1 | 24.8 | 17.8 | 22.4 | 32.5 | HF Checkpoints 🤗 |

NOTE:

1. APfixed is evaluated on LVIS minival with fixed AP.

2. APmini is evaluated on LVIS minival.

3. APval is evaluated on LVIS val 1.0.

Getting started

1. Installation

YOLO-World is developed based on torch==1.11.0, mmyolo==0.6.0, and mmdetection==3.0.0.

python setup.py build develop
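To compare an environment against the versions listed above, a small check like the following may help. It is only a sketch using the standard library: the distribution name `mmdet` for mmdetection is an assumption about how the package was installed, and `installed_version` and `report` are illustrative helper names, not part of this repo.

```python
from importlib import metadata

# Pins taken from the installation note; "mmdet" as the distribution name
# for mmdetection is an assumption and may differ in your environment.
PINNED = {"torch": "1.11.0", "mmyolo": "0.6.0", "mmdet": "3.0.0"}


def installed_version(name):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def report(pinned):
    """Return (name, wanted, found, ok) for every pinned package."""
    rows = []
    for name, wanted in pinned.items():
        found = installed_version(name)
        # Local build suffixes such as "+cu113" are ignored for the comparison.
        ok = found is not None and found.split("+")[0] == wanted
        rows.append((name, wanted, found, ok))
    return rows


for name, wanted, found, ok in report(PINNED):
    status = "ok" if ok else "mismatch"
    print(f"{name}: wanted {wanted}, found {found or 'not installed'} [{status}]")
```

A mismatch does not necessarily mean the code will fail; it only flags that the environment differs from the one YOLO-World was developed on.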

2. Preparing Data

We provide the details about the pre-training data in docs/data.

Training & Evaluation

We adopt the default training or evaluation scripts of mmyolo.

We provide the configs for pre-training and fine-tuning in configs/pretrain and configs/finetune_coco.

Training YOLO-World is easy:

chmod +x tools/dist_train.sh

# sample command for pre-training, use AMP for mixed-precision training

./tools/dist_train.sh configs/pretrain/yolo_world_l_t2i_bn_2e-4_100e_4x8gpus_obj365v1_goldg_train_lvis_minival.py 8 --amp

NOTE: YOLO-World is pre-trained on 4 nodes with 8 GPUs per node (32 GPUs in total). For pre-training, the node_rank and nnodes for multi-node training should be specified.
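For the 4-node setup described in the note, the per-node launch commands could be generated as sketched below. This assumes that `tools/dist_train.sh` reads `NNODES`, `NODE_RANK`, `MASTER_ADDR`, and `PORT` from the environment, as the stock OpenMMLab launch scripts do; please check the script in this repo before relying on it. The address `10.0.0.1`, the port `29500`, and the helper `launch_commands` are placeholders for illustration.

```python
import shlex

CONFIG = (
    "configs/pretrain/"
    "yolo_world_l_t2i_bn_2e-4_100e_4x8gpus_obj365v1_goldg_train_lvis_minival.py"
)


def launch_commands(config, nnodes, gpus_per_node, master_addr, port=29500):
    """Return one shell command per node for a multi-node run."""
    commands = []
    for node_rank in range(nnodes):
        # Assumed environment variables; verify them against tools/dist_train.sh.
        env = {
            "NNODES": nnodes,
            "NODE_RANK": node_rank,
            "MASTER_ADDR": master_addr,
            "PORT": port,
        }
        prefix = " ".join(f"{k}={shlex.quote(str(v))}" for k, v in env.items())
        commands.append(
            f"{prefix} ./tools/dist_train.sh {shlex.quote(config)} {gpus_per_node} --amp"
        )
    return commands


# 10.0.0.1 is a placeholder for the address of the rank-0 node.
for command in launch_commands(CONFIG, nnodes=4, gpus_per_node=8, master_addr="10.0.0.1"):
    print(command)
```

Each printed line is meant to be run on the node whose rank it names; only `NODE_RANK` differs between them.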

Evaluating YOLO-World is also easy:

chmod +x tools/dist_test.sh

./tools/dist_test.sh path/to/config path/to/weights 8

NOTE: We mainly evaluate the performance on LVIS-minival for pre-training.

Fine-tuning YOLO-World

We provide the details about fine-tuning YOLO-World in docs/fine-tuning.

Deployment

We provide the details about deployment for downstream applications in docs/deployment. You can directly download the ONNX model through the online demo in Huggingface Spaces 🤗.

Demo

We provide the Gradio demo for local devices:

python demo.py path/to/config path/to/weights

Acknowledgement

We sincerely thank mmyolo, mmdetection, GLIP, and transformers for providing their wonderful code to the community!

Citations

If you find YOLO-World useful in your research or applications, please consider giving us a star 🌟 and citing it.

@article{cheng2024yolow,

title={YOLO-World: Real-Time Open-Vocabulary Object Detection},

author={Cheng, Tianheng and Song, Lin and Ge, Yixiao and Liu, Wenyu and Wang, Xinggang and Shan, Ying},

journal={arXiv preprint arXiv:2401.17270},

year={2024}

}

Licence

YOLO-World is under the GPL-v3 Licence and is supported for commercial usage.