Generate music

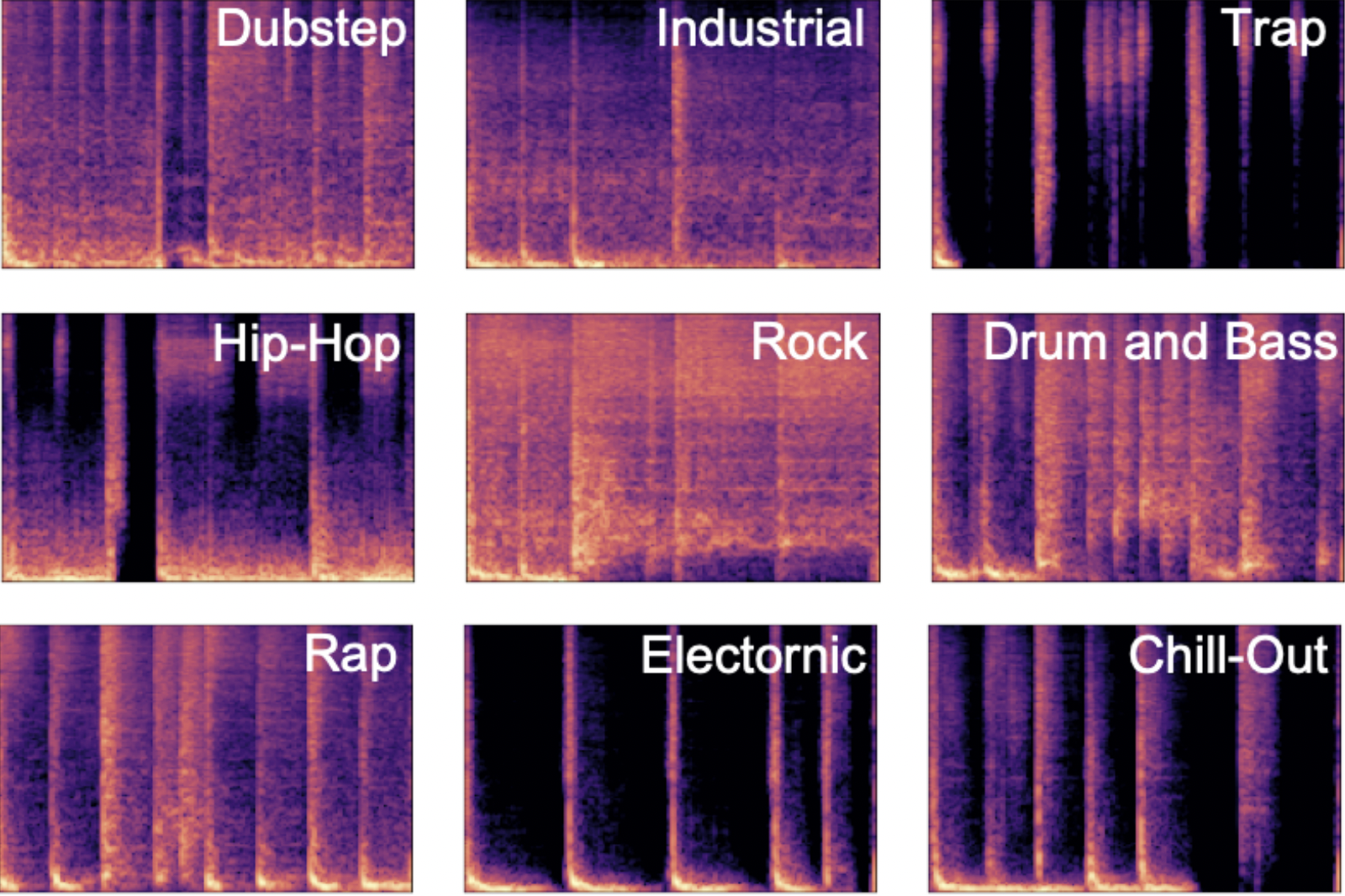

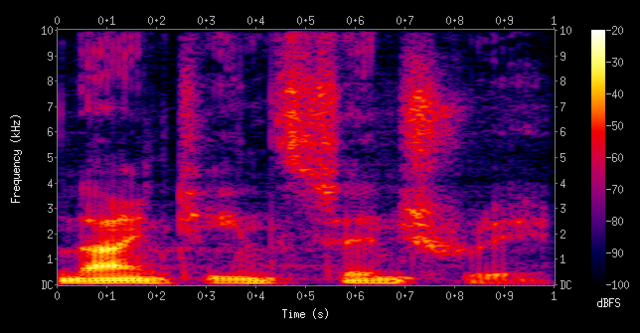

These models generate and modify music from text prompts and raw audio. They combine large language models and diffusion models trained on text-music pairs to understand musical concepts.

Key capabilities:

- Music generation: Create original music compositions and continuations based on text prompts. Generate realistic music matching a description.

- Audio super-resolution: Increase sample rates and add high-frequency detail to improve the fidelity of generated or existing audio.

- Controllable generation: Specify parameters like chords, instruments, tempo, and style to control the generated music.

Our Pick: Riffusion

For most users, we recommend Riffusion as the best general-purpose music generation model. It generates high-quality music from text prompts in near real time, usually in around 10 seconds.

Riffusion uses a latent diffusion model to generate a mel spectrogram (an audio representation) which is then converted into realistic audio. This allows it to create music matching a description extremely quickly.
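To make the "mel spectrogram" idea concrete, here is a minimal sketch of the mel scale itself, using the widely used HTK-style conversion formula. The band count and frequency range are illustrative assumptions, not Riffusion's actual settings, and the helper names are hypothetical.

```python
import math


def hz_to_mel(freq_hz: float) -> float:
    """Convert a frequency in Hz to mels (HTK-style formula)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel: float) -> float:
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_band_centers(n_bands: int, f_min: float, f_max: float) -> list:
    """Center frequencies (Hz) of n_bands bands spaced evenly on the mel scale."""
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / (n_bands + 1)
    return [mel_to_hz(m_min + step * (i + 1)) for i in range(n_bands)]


# Assumed values for illustration only: 8 bands between 0 Hz and 8 kHz.
centers = mel_band_centers(n_bands=8, f_min=0.0, f_max=8000.0)
for hz in centers:
    print(f"{hz:8.1f} Hz")
```

The printed centers sit close together at low frequencies and spread out at high frequencies, which roughly mirrors how pitch is perceived. A mel spectrogram stores energy per band over time, so it can be treated like an image by a diffusion model.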

To get the most out of generated audio, we also recommend running it through an audio super-resolution model like nateraw/audio-super-resolution. This will increase the sample rate and improve the overall fidelity in about 45 seconds.
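As a rough illustration of why a dedicated model is useful here, the sketch below upsamples a signal by plain linear interpolation. The sample rate goes up, but no detail above the original Nyquist limit (half the original sample rate) is recovered; supplying that missing detail is the job of a super-resolution model. The 16 kHz and 48 kHz rates and the helper names are assumptions chosen for illustration, not documented settings of any model listed here.

```python
import math


def nyquist_hz(sample_rate: int) -> float:
    """Highest frequency a signal sampled at sample_rate can represent."""
    return sample_rate / 2.0


def upsample_linear(samples: list, factor: int) -> list:
    """Naive upsampling by an integer factor using linear interpolation."""
    if factor < 1:
        raise ValueError("factor must be a positive integer")
    out = []
    for i in range(len(samples) - 1):
        a, b = samples[i], samples[i + 1]
        for k in range(factor):
            out.append(a + (b - a) * k / factor)
    if samples:
        out.append(samples[-1])
    return out


low_rate = 16_000           # assumed input sample rate
high_rate = low_rate * 3    # 48 kHz after 3x upsampling

# 10 ms of a 440 Hz sine tone at the low sample rate.
tone = [math.sin(2 * math.pi * 440 * n / low_rate) for n in range(160)]
upsampled = upsample_linear(tone, 3)

print(f"Nyquist before: {nyquist_hz(low_rate):.0f} Hz")
print(f"Nyquist after:  {nyquist_hz(high_rate):.0f} Hz")
print(f"Samples: {len(tone)} -> {len(upsampled)}")
```

The higher Nyquist limit only describes what the new sample rate could hold. The interpolated signal still contains no genuine content in that upper range.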

Also Great: MusicGen

If you want more control over your generations, the MusicGen family of models is a great option. In particular, we recommend:

- musicgen-remixer for remixing an existing song into a new style

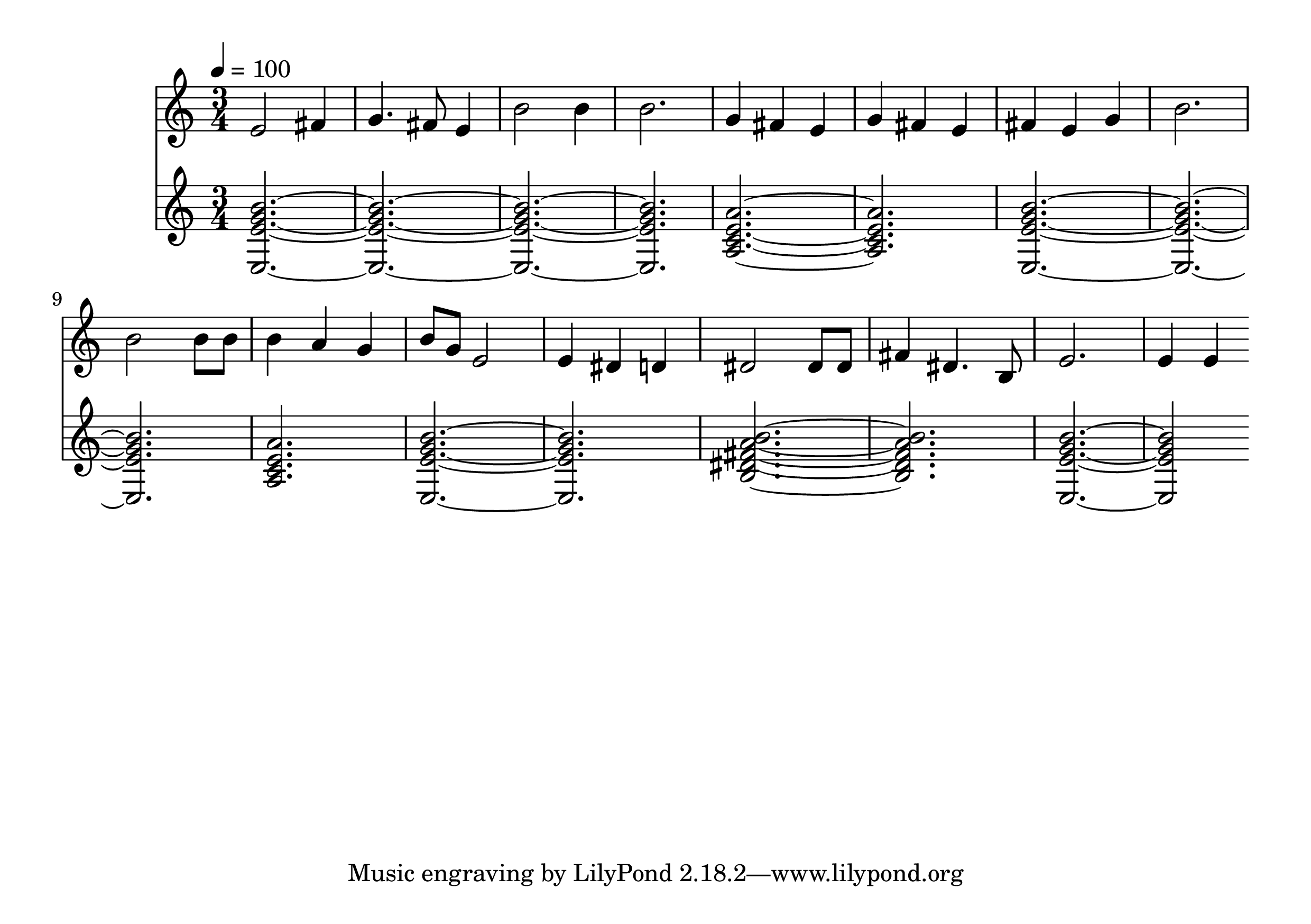

- musicgen-chord for specifying exact chords and tempo

- musicgen-stereo-chord for stereo output with chords and tempo control

These models give you much more fine-grained control, at the cost of longer generation times (3-5 minutes). They’re great for musicians and composers who want to dial in specific parameters.
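When dialing in chords and tempo, it helps to know how long a progression lasts at a given tempo. The sketch below assumes one chord per bar in 4/4 time. The keys in `model_input` are hypothetical placeholders, not the documented inputs of any model above, so check each model's own input schema before using them.

```python
def bar_seconds(bpm: float, beats_per_bar: int = 4) -> float:
    """Length of one bar in seconds at the given tempo."""
    return beats_per_bar * 60.0 / bpm


def chord_schedule(chords: list, bpm: float, beats_per_bar: int = 4) -> list:
    """Return (chord, start_seconds, end_seconds), assuming one chord per bar."""
    length = bar_seconds(bpm, beats_per_bar)
    return [(chord, i * length, (i + 1) * length) for i, chord in enumerate(chords)]


progression = ["C", "G", "Am", "F"]
schedule = chord_schedule(progression, bpm=120)
total_seconds = schedule[-1][2]

# Hypothetical input payload: these key names are assumptions for illustration.
model_input = {
    "prompt": "warm lo-fi piano",
    "chords": " ".join(progression),
    "bpm": 120,
    "duration": total_seconds,
}

for chord, start, end in schedule:
    print(f"{chord:>3}: {start:4.1f}s to {end:4.1f}s")
```

Computing the duration from the progression keeps the requested clip length aligned with whole bars, which avoids a chord being cut off mid-bar.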

Other Alternatives

A few other models enable interesting niche capabilities:

- EMOPIA generates music conditioned on a desired emotion

- Mustango provides extra control tags for audio quality, duration, etc.

- Cantable Diffuguesion generates and harmonizes Bach chorales

Recommended models

meta/musicgen

Generate music from a prompt or melody

riffusion/riffusion

Stable diffusion for real-time music generation

allenhung1025/looptest

Four-bar drum loop generation

nateraw/audio-super-resolution

AudioSR: Versatile Audio Super-resolution at Scale

haoheliu/audio-ldm

Text-to-audio generation with latent diffusion models

andreasjansson/music-inpainting-bert

Music inpainting of melody and chords

sakemin/musicgen-remixer

Remix music into another style with MusicGen Chord

andreasjansson/cantable-diffuguesion

Bach chorale generation and harmonization

harmonai/dance-diffusion

Tools to train a generative model on arbitrary audio samples

fofr/musicgen-choral

MusicGen fine-tuned on chamber choir music

declare-lab/mustango

Controllable Text-to-Music Generation

annahung31/emopia

Emotion-conditioned music generation using a transformer-based model

sakemin/musicgen-stereo-chord

Generate music in stereo, restricted to chord sequences and tempo

sakemin/musicgen-chord

Generate music restricted to chord sequences and tempo

nateraw/musicgen-songstarter-v0.2

A large, stereo MusicGen that acts as a useful tool for music producers

lucataco/magnet

MAGNeT: Masked Audio Generation using a Single Non-Autoregressive Transformer

fofr/musicgen-epic

MusicGen fine-tuned on an epic orchestral style