GANs N’ Roses PyTorch

Abstract

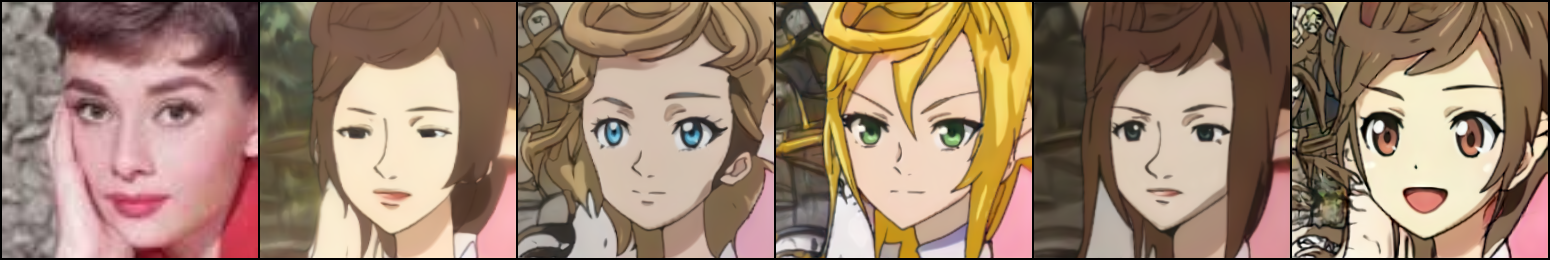

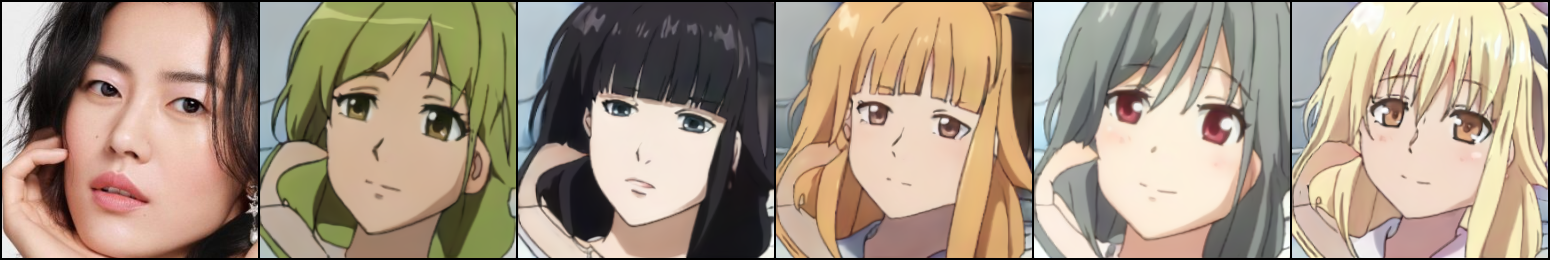

We show how to learn a map that takes a content code, derived from a face image, and a randomly chosen style code to an anime image. We derive an adversarial loss from our simple and effective definitions of style and content. This adversarial loss guarantees the map is diverse: a very wide range of anime can be produced from a single content code. Under plausible assumptions, the map is not just diverse, but also correctly represents the probability of an anime, conditioned on an input face. In contrast, current multimodal generation procedures cannot capture the complex styles that appear in anime. Extensive quantitative experiments support the idea that the map is correct. Extensive qualitative results show that the method can generate a much more diverse range of styles than state-of-the-art comparisons. Finally, we show that our formalization of content and style allows us to perform video-to-video translation without ever training on videos.

Usage

To generate stylized anime outputs, input an image or video file to the model.
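
The sketch below illustrates the control flow the abstract describes: one content code combined with several random style codes gives diverse outputs for an image, while a video can plausibly be handled by reusing a single style code on every frame. It is a toy illustration in plain Python, not this repository's API. The names `encode_content`, `sample_style`, `generate`, `translate_image`, `translate_video`, and `STYLE_DIM` are hypothetical, and the per-frame reuse of one style code is an assumption drawn from the abstract rather than a documented interface. In practice the stand-in functions would be replaced by the trained PyTorch encoder and generator.

```python
import random

STYLE_DIM = 8  # hypothetical style-code length, chosen only for this sketch


def encode_content(frame):
    """Toy stand-in for a content encoder: returns a copy of the frame."""
    return list(frame)


def sample_style(rng):
    """Draw a random style code from a standard normal distribution."""
    return [rng.gauss(0.0, 1.0) for _ in range(STYLE_DIM)]


def generate(content, style):
    """Toy stand-in for the generator: shifts content by the mean style value."""
    shift = sum(style) / len(style)
    return [c + shift for c in content]


def translate_image(frame, num_styles, rng):
    """One content code, several random style codes: several distinct outputs."""
    content = encode_content(frame)
    return [generate(content, sample_style(rng)) for _ in range(num_styles)]


def translate_video(frames, rng):
    """Assumption: sample one style code and reuse it for every frame."""
    style = sample_style(rng)
    return [generate(encode_content(f), style) for f in frames]


rng = random.Random(0)  # fixed seed so the sketch is repeatable
face = [0.1, 0.5, 0.9]  # placeholder for real image data
outputs = translate_image(face, num_styles=4, rng=rng)
video = translate_video([face, [0.2, 0.6, 1.0]], rng=rng)
```

Here `outputs` holds four differently styled versions of the same input, and both entries of `video` share one style, so only the content changes from frame to frame.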

Citation

If you use this code or ideas from our paper, please cite our paper:

@misc{chong2021gans,
      title={GANs N' Roses: Stable, Controllable, Diverse Image to Image Translation (works for videos too!)},
      author={Min Jin Chong and David Forsyth},
      year={2021},
      eprint={2106.06561},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}

Acknowledgments

This code borrows heavily from StyleGAN2 by rosinality and partly from UGATIT.