Readme

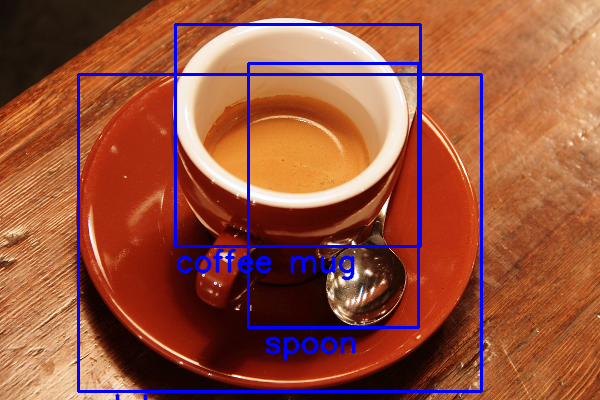

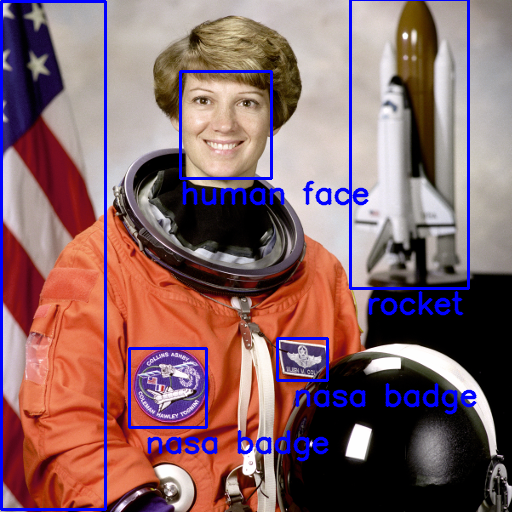

OWL-ViT

OWL-ViT uses CLIP and vision transformers backbones to enable open-vocabulary object detection. See the paper, original repository and Hugging Face implementation for details.

Using the API

You can use OWL-ViT to query images with text descriptions of any object. To use it, simply upload an image and enter comma separated text descriptions of objects you want to query the image for. You can also use the score threshold slider to set a threshold to filter out low probability predictions.

OWL-ViT is trained on text templates, hence you can get better predictions by querying the image with text templates used in training the original model: “photo of a star-spangled banner”, “image of a shoe”. Refer to the CLIP paper to see the full list of text templates used to augment the training data.

References

@article{minderer2022simple,

title={Simple Open-Vocabulary Object Detection with Vision Transformers},

author={Matthias Minderer, Alexey Gritsenko, Austin Stone, Maxim Neumann, Dirk Weissenborn, Alexey Dosovitskiy, Aravindh Mahendran, Anurag Arnab, Mostafa Dehghani, Zhuoran Shen, Xiao Wang, Xiaohua Zhai, Thomas Kipf, Neil Houlsby},

journal={ECCV},

year={2022},

}