Collections

Generate images

Use AI to generate images & photos with an API

Caption videos

Use AI to understand, describe, and caption videos with an API

Generate speech

Use AI for text-to-speech or to clone your voice via API

Generate images from a face

Use AI to generate images from a face with an API

Generate videos

Use AI to generate videos with an API

Upscale images with super resolution

Use AI to upscale and enhance images with an API

Generate music

Use AI to generate music with an API

Edit any image

Use AI to edit any image via API

Transcribe speech to text

Use AI to transcribe speech to text with an API

OCR to extract text from images

Use AI for Optical Character Recognition (OCR) to extract text from images via API

Remove backgrounds

Use AI to remove backgrounds from images and videos with an API

FLUX family of models

FLUX AI models by Black Forest Labs: image generation & editing via API

Restore images

Use AI to restore images via API

Enhance videos

Use AI to upscale, restore, extend, and enhance videos with an API

Detect NSFW content

Detect NSFW content in images and text

Classify text

Classify text by sentiment, topic, intent, or safety

Speaker diarization

Identify speakers from audio and video inputs

Create realistic face swaps

Replace faces across images with natural-looking results.

Turn sketches into images

Transform rough sketches into polished visuals

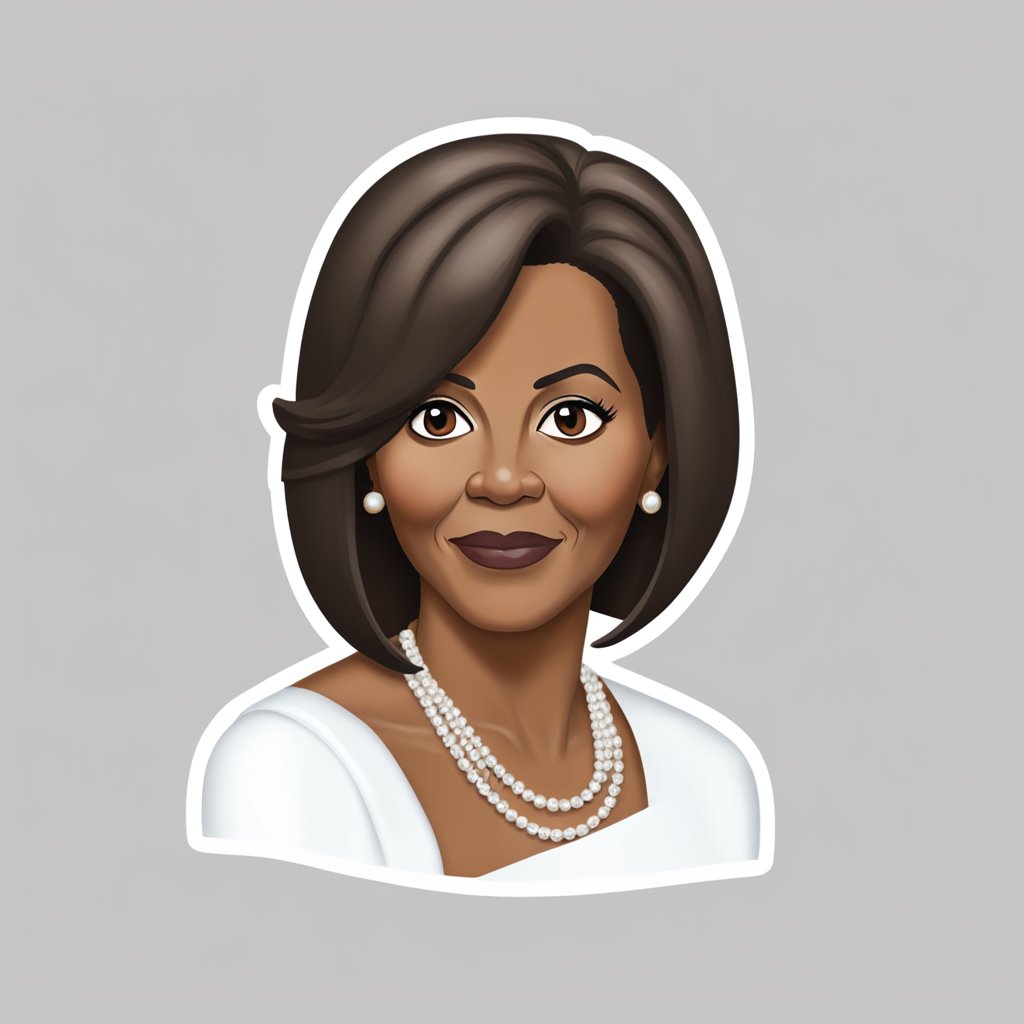

Generate emojis

Generate custom emojis from text or images

Generate anime-style images and videos

Create anime-style characters, scenes, and animations

Generate videos from images

Use AI to generate videos from images with an API

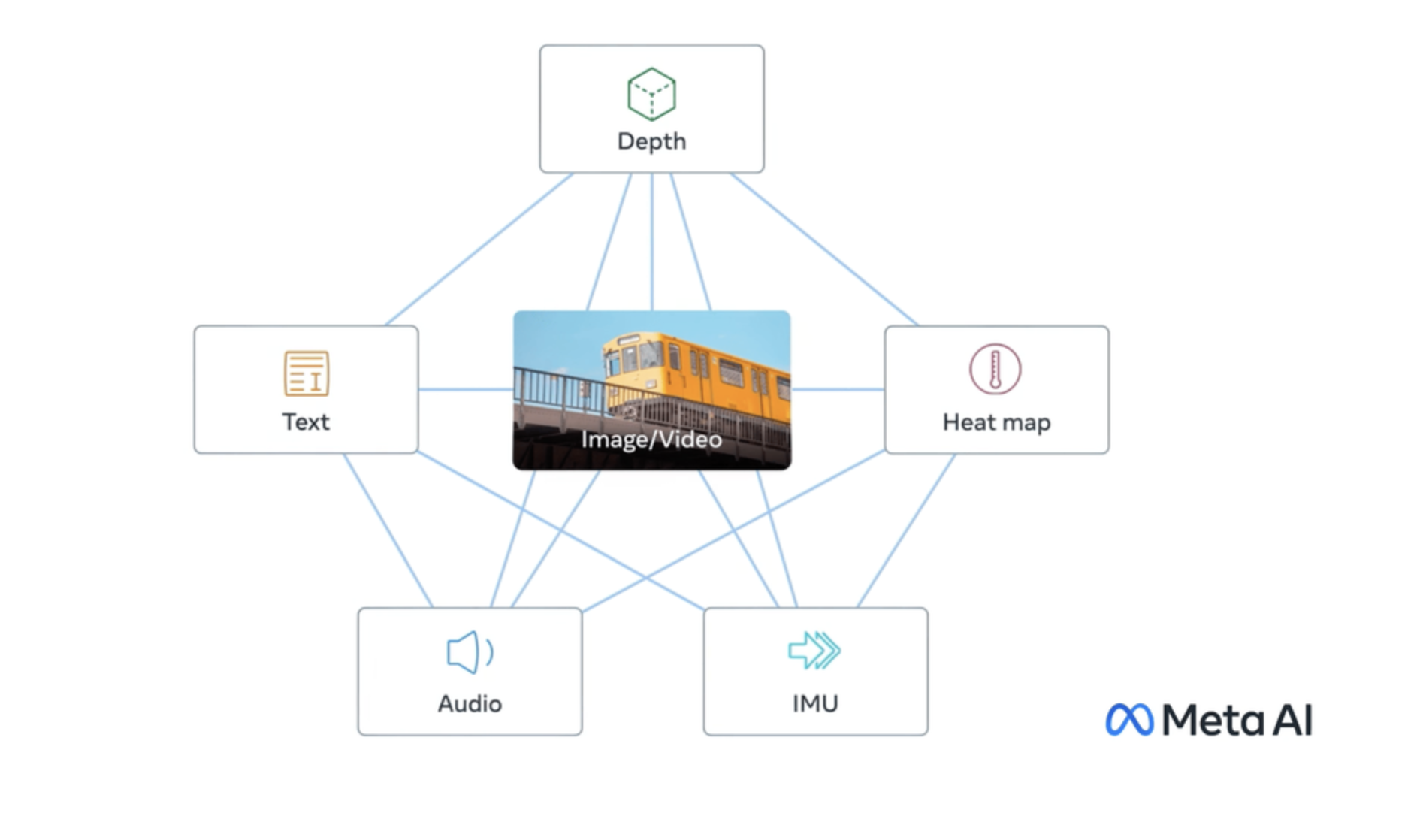

Vision models

Chat with images — visual Q&A, analysis, and reasoning via API

Caption images

Use AI to generate captions and descriptions from images with an API

Edit your videos

Use AI to edit, restyle, extend, and remix videos with an API

WAN family of models

WAN family of models: open-source video, image, and audio generation

Create 3D content

Generate 3D objects, meshes, and textures from text or images with an API

Official models

Official models are always on, predictably priced, and have a stable API.

Large Language Models (LLMs)

Explore Large Language Models (LLMs) for chat, generation & NLP tasks via API

Try AI models for free

Try AI models for free: video generation, image generation, upscaling, and photo restoration

Lipsync videos

Use AI to generate lipsync videos with an API

Control image generation

Use AI to control image generation with an API

Embedding models

Embedding models for AI search and analysis

Object detection and segmentation

Use AI object detection and segmentation models to distinguish objects in images & videos

Flux fine-tunes

Flux fine-tunes: build and run custom AI image models via API

Kontext fine-tunes

Kontext fine-tunes: build custom AI image models with an API

Create songs with voice cloning

Create songs with voice cloning models via API

Media utilities

AI media utilities: auto-caption, watermark, frame extraction & more via API

Qwen-Image fine-tunes

Browse the diverse range of Qwen-Image fine-tunes the community has custom-trained on Replicate.