Explore

Fine-tune FLUX fast

Customize FLUX.1 [dev] with the fast FLUX trainer on Replicate

Train the model to recognize and generate new concepts using a small set of example images, for specific styles, characters, or objects. It's fast (under 2 minutes), cheap (under $2), and gives you a warm, runnable model plus LoRA weights to download.

Featured models

bytedance / seedance-1-pro

A pro version of Seedance that offers text-to-video and image-to-video support for 5s or 10s videos, at 480p and 1080p resolution

bytedance / seedance-1-lite

A video generation model that offers text-to-video and image-to-video support for 5s or 10s videos, at 480p and 720p resolution

black-forest-labs / flux-kontext-max

A premium text-based image editing model that delivers maximum performance and improved typography generation for transforming images through natural language prompts

black-forest-labs / flux-kontext-pro

A state-of-the-art text-based image editing model that delivers high-quality outputs with excellent prompt following and consistent results for transforming images through natural language

runwayml / gen4-image

Runway's Gen-4 Image model with references. Use up to 3 reference images to create the exact image you need. Capture every angle.

black-forest-labs / flux-kontext-dev

Open-weight version of FLUX.1 Kontext

bytedance / seedream-3

A text-to-image model with support for native high-resolution (2K) image generation

kwaivgi / kling-v2.1

Use Kling v2.1 to generate 5s and 10s videos in 720p and 1080p resolution from a starting image (image-to-video)

ideogram-ai / ideogram-v3-quality

The highest quality Ideogram v3 model. v3 creates images with stunning realism, creative designs, and consistent styles

Official models

Official models are always on, maintained, and have predictable pricing.

I want to…

Generate images

Models that generate images from text prompts

Generate videos

Models that create and edit videos

Edit images

Tools for editing images.

Upscale images

Upscaling models that create high-quality images from low-quality images

Generate speech

Convert text to speech

Transcribe speech

Models that convert speech to text

Use LLMs

Models that can understand and generate text

Caption videos

Models that generate text from videos

Make 3D stuff

Models that generate 3D objects, scenes, radiance fields, textures and multi-views.

Restore images

Models that improve or restore images by deblurring, colorization, and removing noise

Generate music

Models to generate and modify music

Caption images

Models that generate text from images

Make videos with Wan2.1

Generate videos with Wan2.1, the fastest and highest quality open-source video generation model.

Use handy tools

Toolbelt-type models for videos and images.

Control image generation

Guide image generation with more than just text. Use edge detection, depth maps, and sketches to get the results you want.

Extract text from images

Optical character recognition (OCR) and text extraction

Chat with images

Ask language models about images

Sing with voices

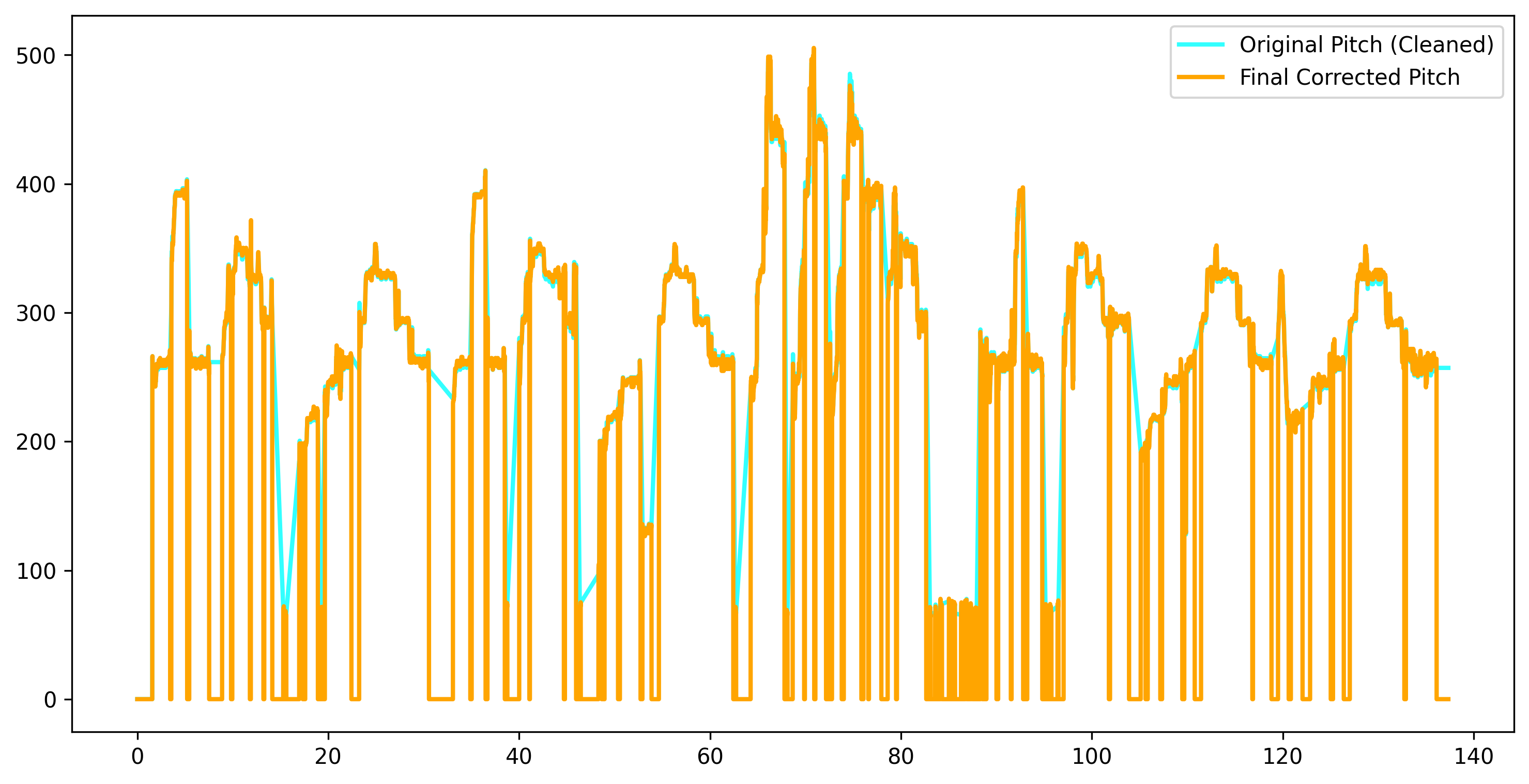

Voice-to-voice cloning and musical prosody

Get embeddings

Models that generate embeddings from inputs

Use a face to make images

Make realistic images of people instantly

Remove backgrounds

Models that remove backgrounds from images and videos

Try for free

Get started with these models without adding a credit card. Whether you're making videos, generating images, or upscaling photos, these are great starting points.

Use the FLUX family of models

The FLUX family of text-to-image models from Black Forest Labs

Use official models

Official models are always on, maintained, and have predictable pricing.

Enhance videos

Models that enhance videos with super-resolution, sound effects, motion capture and other useful production effects.

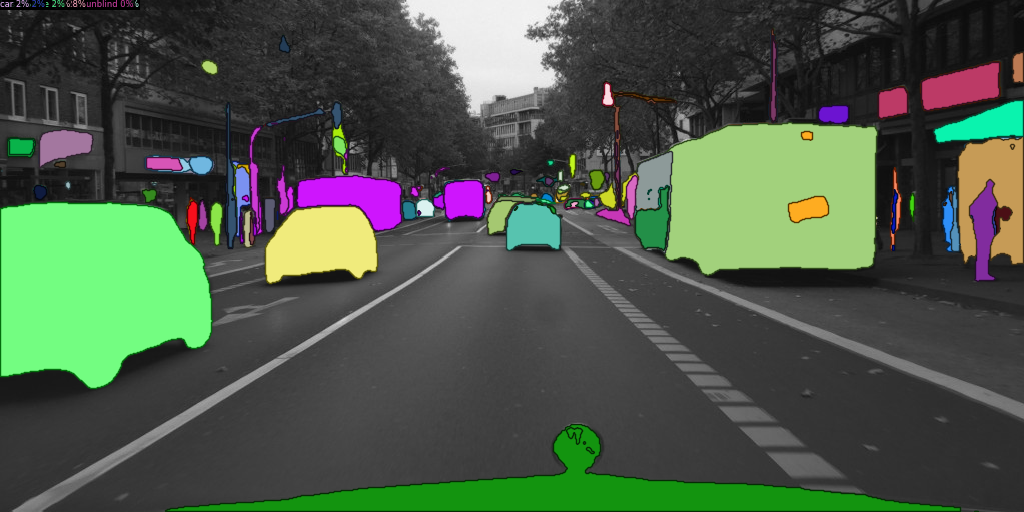

Detect objects

Models that detect or segment objects in images and videos.

Use FLUX fine-tunes

Browse the diverse range of fine-tunes the community has custom-trained on Replicate

Popular models

SDXL-Lightning by ByteDance: a fast text-to-image model that makes high-quality images in 4 steps

This is the fastest Flux Dev endpoint in the world. Contact us for more at pruna.ai

Practical face restoration algorithm for *old photos* or *AI-generated faces*

whisper-large-v3, incredibly fast, powered by Hugging Face Transformers! 🤗

Return CLIP features for the clip-vit-large-patch14 model

Latest models

A pro version of Seedance that offers text-to-video and image-to-video support for 5s or 10s videos, at 480p and 1080p resolution

A video generation model that offers text-to-video and image-to-video support for 5s or 10s videos, at 480p and 720p resolution

GPU accelerated replay renderer / video data clipper for comma.ai connect's openpilot route data. SEE README.

[Quality Mode] Scaling Diffusion Models for High Resolution Textured 3D Assets Generation

Flux Content Filter - Check for public figures and copyright concerns

Open-weight version of FLUX.1 Kontext via Hugging Face Diffusers

A premium text-based image editing model that delivers maximum performance and improved typography generation for transforming images through natural language prompts

A state-of-the-art text-based image editing model that delivers high-quality outputs with excellent prompt following and consistent results for transforming images through natural language

Fast endpoint for Flux Kontext, optimized with the Pruna framework

Runway's Gen-4 Image model with references. Use up to 3 reference images to create the exact image you need. Capture every angle.

Use flux-kontext-pro to change the first or last frame of a video. The edited frames are useful as inputs for restyling an entire video

Audio-driven multi-person conversational video generation - Upload audio files and a reference image to create realistic conversations between multiple people

Generates unrestricted images from text prompts using a fine-tuned Stable Diffusion model

Recognise, describe and retrieve data within an image with great accuracy.

Run any ComfyUI workflow. Guide: https://github.com/replicate/cog-comfyui

A text-to-image model with support for native high-resolution (2K) image generation

A version of flux-dev, a text-to-image model, that supports fast inference with fine-tuned LoRAs

A 12-billion-parameter rectified flow transformer capable of generating images from text descriptions

The fastest image generation model tailored for local development and personal use

The fastest image generation model tailored for fine-tuned use

Quickly detect nudity, violence, hentai, porn and more NSFW content in images.

Translate text from one language to another with support for multiple text formats.

Transform text into natural-sounding human-like AI voices with low latency and exceptional quality.

Uses DINO to detect regions and further refines them with SAM. Returns masking data as RLE encoded JSON.

Effortlessly search the web and get high-quality, AI-powered results.

Generate an image based on the given text by employing AI models like Flux, Stable Diffusion, and other top models.

OneFormer: One Transformer to Rule Universal Image Segmentation